The future fraud stack: Why AI won’t replace rules or ML

Every few years, the fraud industry discovers a new technology that will supposedly change everything forever.

Vendors appear, conference panels become animated, and LinkedIn fills with confident predictions about the future of fraud detection. If you’ve worked in this space long enough, the pattern becomes easy to recognize.

We saw it when rules engines promised scalable fraud prevention for e-commerce.

We saw it again when machine learning arrived, supposedly rendering expert-driven rules obsolete.

There was even a brief time when blockchain was supposedly destined to make fraud a mathematical impossibility.

Today, the spotlight has shifted to LLMs and AI agents. While the conversation is repeating itself, the claims have grown more dramatic. Current narratives suggest fraud specialists will disappear, alongside the rules and ML models they built. Some argue that AI will simply “take care of it.”

That never happens.

Fraud prevention has adopted many technologies over the last two decades. Each one added new capabilities and improved how we operate at scale, but none of them completely replaced the tools that came before.

Rules did not vanish when machine learning became mainstream in fraud detection systems. Rules did not eliminate manual review teams, even in highly automated fintechs. Similarly, LLMs will not replace any of them in the foreseeable future.

The practitioner’s reality

The reason is simple once you step back from the hype cycle: fraud prevention is not one problem that can be solved with one algorithm. It is a collection of operational challenges that require different tools, different processes, and different expertise.

Once you see it that way, technology debates start sounding like vague futurism rather than know-how grounded in real practitioner experience. Think about a carpenter building furniture in a workshop. They do not debate whether a hammer will replace a saw or whether a drill eliminates the need for a marking knife. Each tool performs a different task, and the combination of tools enables the craft.

The emergence of LLMs does not suddenly invalidate these capabilities. Instead, it introduces a new type of tool that improves how existing tools are used. But to understand why, we first need to revisit how modern fraud systems actually worked before the emergence of AI.

Modern layered fraud defense strategy

When people talk about layered fraud defenses, they often mean monitoring users throughout their lifecycle. A user might be evaluated during signup, again during account activity, and later during payments or withdrawals. This type of layering focuses on where detection occurs in the user journey.

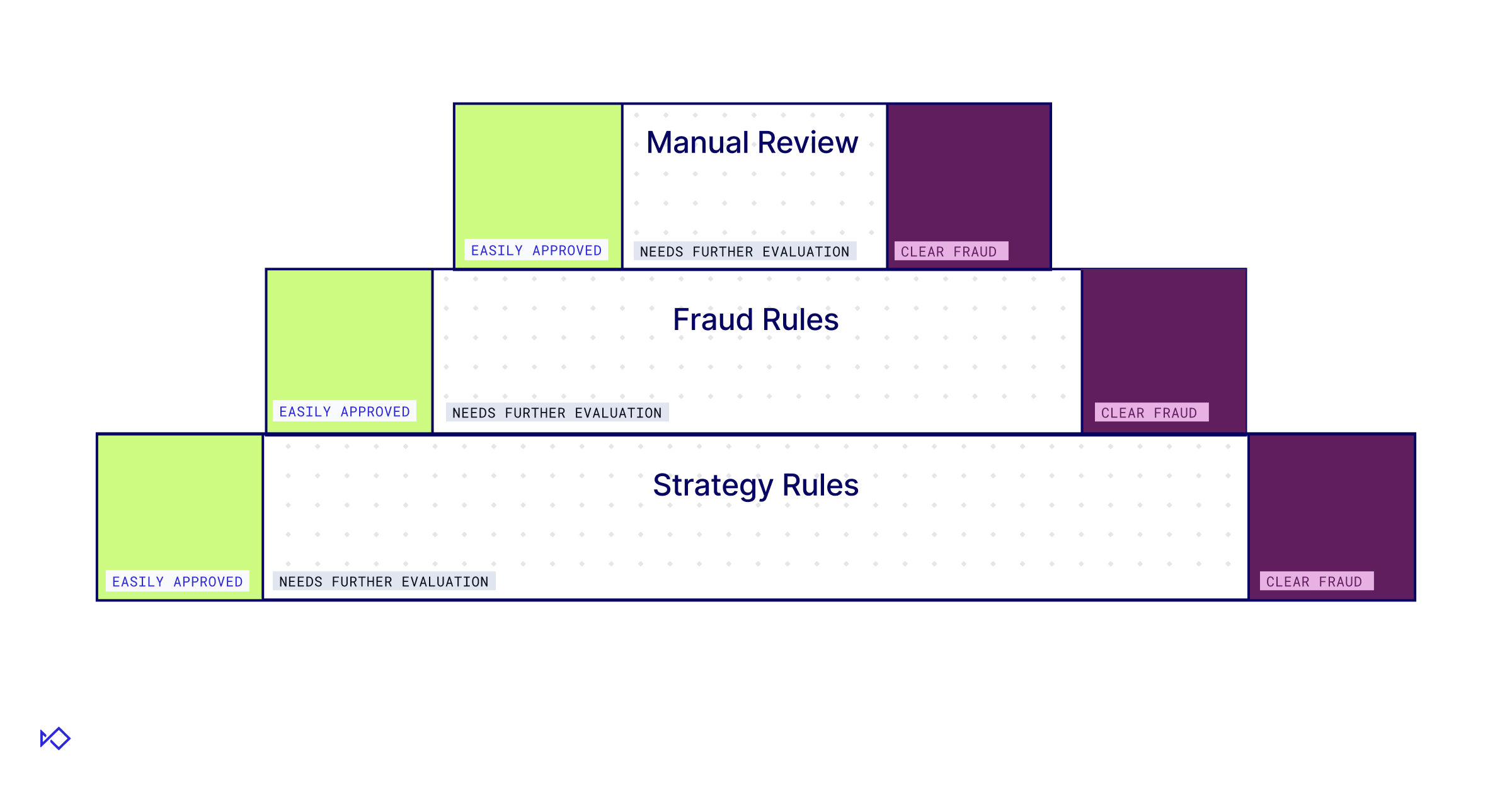

But there is another type of layering that matters just as much: layering different detection approaches around the same event. This is the architecture most mature fraud programs eventually converge on. A typical fraud defense system contains three core layers that solve distinct operational problems:

The first layer is machine learning models. These analyze large sets of behavioral and transactional signals to produce statistical risk scores. This layer provides the scale needed to score millions of transactions per day, something no human team could manage safely at global volumes.

The second layer is expert-driven rules. These rules encode fraud patterns discovered by analysts, allowing teams to react quickly to new attacks. When done right, rules provide the precision to target specific patterns that models might miss or haven’t been trained on yet.

The third layer is manual review. Human investigators analyze the complex or ambiguous cases that automated systems cannot resolve reliably. This layer provides the judgement, incorporating external context and operational knowledge that algorithms simply lack.

Most mature fraud programs eventually adopt this layered architecture because it balances automation, accuracy, and operational cost.
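As a rough illustration, the three layers can be sketched as a single decision function applied to one event. Everything here is a simplifying assumption rather than a reference design: the `Event` fields, the example rule, and the two score thresholds are hypothetical, and the model score is assumed to arrive from an upstream ML model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Event:
    amount: float
    account_age_days: int
    model_score: float  # assumed output of an upstream ML model, in [0, 1]


# Expert-driven rules as (name, predicate) pairs. The rule below is a
# made-up example, not a recommended production rule.
RULES: list[tuple[str, Callable[[Event], bool]]] = [
    ("new_account_high_amount",
     lambda e: e.account_age_days < 2 and e.amount > 1000),
]


def decide(event: Event,
           approve_below: float = 0.2,
           decline_above: float = 0.9) -> tuple[str, str]:
    """Return (decision, layer that produced it) for a single event."""
    # Rules layer: precise, analyst-written patterns take priority.
    for name, predicate in RULES:
        if predicate(event):
            return "decline", f"rule:{name}"
    # ML layer: clear-cut scores are decided automatically.
    if event.model_score >= decline_above:
        return "decline", "model"
    if event.model_score < approve_below:
        return "approve", "model"
    # Manual review layer: ambiguous scores go to a human investigator.
    return "review", "manual_review_queue"
```

The ordering (rules first, then score bands) is one possible design choice. Many teams run rules and models side by side and reconcile the outputs in a separate policy step.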

But once that structure is in place, a different question emerges: how does AI actually fit into this system? Are we seeing the emergence of a fourth layer?

Not quite.

The manual processes behind fraud systems

Comparing LLMs and Agentic AI to existing detection approaches reveals their “superpower” quickly. Agents excel at solving problems that have many conditions and edge cases. Up until now, these problems were hard to automate with rules or ML, meaning they would be delegated to humans, who are the most expensive and constrained resource in our layered defense.

LLMs prove to be efficient at automating such tasks. But what are these tasks?

When people think about manual work in fraud prevention, they usually think about case investigations. They picture analysts reviewing suspicious accounts, checking supporting documentation, and making final decisions about risky events. This is the most visible manual process in most fraud teams, but investigations are only half of the story.

Behind every automated fraud detection system lies another set of manual processes that are far less visible. These processes revolve around the research, development, and maintenance of automated defenses.

Rules do not write themselves. Machine learning models do not train themselves. Analysts and data scientists need to research fraud patterns, build detection logic, test their performance, deploy them to production, and monitor their effectiveness over time. This research and development process is extremely labor-intensive. Fraud analysts spend countless hours exploring datasets, identifying patterns, writing rules, evaluating model performance, and investigating anomalies in production systems.

In other words, manual work in fraud prevention exists in two forms:

- Case-level investigations: Analysts review individual events to determine whether they represent fraud.

- R&D for automated systems: Teams design and maintain the rules and models that power detection at scale.

Once you recognize these two manual processes, the role of AI - and specifically Agentic AI - becomes much clearer. But does that mean that AI is going to replace fraud teams themselves?

Why humans still matter

With these new capabilities, it is natural to ask whether Agentic AI would replace fraud specialists rather than the technologies they use. In practice, there are several reasons why this outcome remains unlikely.

One challenge involves reproducibility and consistency. Machine learning models and rule engines produce deterministic outputs given the same inputs. LLMs, by contrast, can generate slightly different responses when presented with identical prompts. This variability makes it difficult to use them as the sole decision engine in high-stakes environments.

Another challenge involves trust and accountability. Fraud detection systems often operate within regulated industries such as banking and payments. Organizations must be able to explain why specific decisions were made and ensure that their detection systems comply with regulatory requirements.

For that reason, human oversight over Agentic AI remains essential, especially for overcoming hallucination risk and reproducibility challenges. This also means that agentic systems need to be built for transparent operations from the ground up.

It’s not enough for an agent to spit out a result - it must show every source it used, the data analysis steps it went through, and any contradicting or missing data. Without these, agentic systems remain almost as much of a black box as ML models.
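One way to make that requirement concrete is to have the agent return a structured record instead of free text, and to check the record before anyone acts on it. The sketch below is a minimal example under assumed names: `AgentFinding` and `transparency_gaps` are hypothetical and do not come from any particular agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class AgentFinding:
    conclusion: str
    sources: list[str] = field(default_factory=list)         # where the data came from
    analysis_steps: list[str] = field(default_factory=list)  # what the agent did with it
    contradicting_evidence: list[str] = field(default_factory=list)
    missing_data: list[str] = field(default_factory=list)


def transparency_gaps(finding: AgentFinding) -> list[str]:
    """List the reasons a finding cannot be audited; empty means auditable."""
    gaps = []
    if not finding.sources:
        gaps.append("no sources cited")
    if not finding.analysis_steps:
        gaps.append("no analysis steps recorded")
    return gaps
```

A finding with gaps would be sent back or escalated rather than accepted. Empty `contradicting_evidence` and `missing_data` lists are allowed here, since an agent may legitimately report that it found none.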

Finally, fraud itself is an adversarial problem. Fraudsters constantly experiment with new techniques designed to exploit weaknesses in detection systems. Experienced analysts often recognize subtle behavioral patterns that automated systems might overlook. These human insights remain a critical component of effective fraud prevention.

The role of AI, therefore, is not replacing fraud professionals but amplifying their capabilities across the stack.

Agentic AI and manual review: the investigator copilot

Manual review has always been a bottleneck in fraud prevention operations. Investigations are time-consuming and require both specialized expertise and data drawn from a fragmented landscape of internal and external sources.

A typical investigator might spend twenty minutes searching public databases, inspecting merchant websites, and cross-referencing transaction histories before they even begin to make a decision. Much of this work involves gathering and synthesizing information rather than applying judgement. This is exactly where LLMs provide meaningful assistance.

AI agents can automatically collect relevant data from internal systems and OSINT sources. They can summarize transaction histories, highlight suspicious patterns, and compile contextual information. This allows analysts to start an investigation with a much richer dataset already assembled.

Consider a merchant onboarding investigation as an example: an AI agent could analyze the merchant’s website, identify inconsistencies in product descriptions, and check whether the business address appears in other suspicious applications.
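The address check in that example can be sketched in a few lines. This is a simplified illustration, not a production matcher: the normalization rules are deliberately naive, and the shape of the application records (`id`, `address`) is an assumption.

```python
import re
from collections import defaultdict


def normalize_address(raw: str) -> str:
    """Lowercase, drop punctuation, and unify a couple of common suffixes."""
    text = raw.lower()
    text = re.sub(r"[^\w\s]", " ", text)      # punctuation becomes whitespace
    text = re.sub(r"\bstreet\b", "st", text)
    text = re.sub(r"\bavenue\b", "ave", text)
    return " ".join(text.split())             # collapse repeated whitespace


def build_index(applications):
    """Map each normalized address to the ids of applications that used it."""
    index = defaultdict(list)
    for app in applications:
        index[normalize_address(app["address"])].append(app["id"])
    return index


def related_applications(address, index):
    """Return ids of previously flagged applications sharing this address."""
    return index.get(normalize_address(address), [])
```

An agent would run a lookup like this against previously flagged applications and attach any matches to the case, leaving the analyst to judge whether a shared address is meaningful (a co-working space, for instance, is not evidence of fraud on its own).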

Instead of spending twenty minutes gathering data, the analyst can focus on interpreting the results and making the final decision. While Agentic AI does not eliminate the need for human investigators, it does reduce their workload. AI agents can automatically resolve common, repetitive cases that match familiar fraud patterns.

For the remaining complex investigations, Agentic AI improves how analysts work. Instead of assembling the dataset behind a case, investigators can now focus on interpreting it and applying judgement.

Agentic AI and rules: scaling expert logic

Expert-driven rules remain one of the most effective tools in fraud prevention. When designed properly, they capture highly specific fraud patterns with exceptional precision and are easier to modify than machine learning models.

However, maintaining these rules requires continuous effort. Fraud patterns evolve quickly, and rules degrade over time as attackers adapt their behavior. Analysts need to spend significant time:

- monitoring performance

- identifying emerging patterns

- adjusting existing logic to maintain accuracy

This process involves significant analytical work. Analysts must explore large datasets to identify patterns that correlate strongly with fraud. They need to test potential rule conditions, evaluate false positive rates, and determine whether a rule will remain effective as fraudsters adapt.

LLMs accelerate these efforts by acting as an exploratory data analysis assistant. Analysts can provide datasets to a model to surface correlations that might warrant a new rule, identifying patterns that would have otherwise required hours of manual pivoting.

Similarly, LLMs can help analyze the performance of existing rules. If a rule’s false positive rate begins increasing, an AI system can analyze the underlying dataset and suggest specific modifications that might restore its accuracy. Analysts then review those suggestions and decide whether they make sense operationally.
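The monitoring step that triggers such an analysis is simple to sketch. The example below assumes "false positive rate" means the share of a rule's alerts that turned out to be legitimate, which is how the term is often used operationally. The function names, the two-week baseline, and the tolerance are all hypothetical choices.

```python
def weekly_false_positive_share(alerts):
    """alerts: iterable of (week, is_fraud) pairs for events the rule flagged.

    Returns {week: share of that week's alerts that were not fraud}.
    """
    totals, false_pos = {}, {}
    for week, is_fraud in alerts:
        totals[week] = totals.get(week, 0) + 1
        if not is_fraud:
            false_pos[week] = false_pos.get(week, 0) + 1
    return {w: false_pos.get(w, 0) / totals[w] for w in sorted(totals)}


def degraded_weeks(shares, baseline_weeks=2, tolerance=0.10):
    """Weeks whose false positive share exceeds the early baseline by more than tolerance."""
    weeks = list(shares)
    if len(weeks) <= baseline_weeks:
        return []  # not enough history to compare against
    baseline = sum(shares[w] for w in weeks[:baseline_weeks]) / baseline_weeks
    return [w for w in weeks[baseline_weeks:] if shares[w] > baseline + tolerance]
```

Weeks returned by `degraded_weeks` are the ones worth handing to an AI assistant, together with the underlying alerts, for suggested changes that an analyst then reviews.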

But this does not mean LLMs will suddenly become expert fraud analysts. Human practitioners still understand not only the context behind fraud patterns, but also the business context in which they operate. Part of that is maintaining the delicate balance between system security and business growth. Another part is judging when skewed datasets might lead to the wrong conclusions.

However, by accelerating the research and analysis process, LLMs can significantly increase the output of a small fraud team.

Instead of spending hours manually exploring datasets, analysts can now focus on evaluating the most promising ideas.

Agentic AI and machine learning: the R&D force multiplier

Machine learning models have become the backbone of modern fraud detection systems. They provide scalable scoring across large datasets and can capture complex relationships between multiple behavioral signals.

However, building effective fraud models is far from trivial. Development requires:

- feature engineering

- data labeling

- algorithm experimentation

- continuous performance monitoring

all of which demand significant time and specialized expertise.

AI helps accelerate these development cycles. For example, LLMs assist with feature engineering by identifying meaningful patterns in textual or unstructured data that traditional models might overlook. They also allow data scientists to explore new segmentation strategies or pinpoint specific subpopulations where a model’s performance has begun to drift.
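Pinpointing a drifting subpopulation usually comes down to comparing a metric per segment across two time windows. The sketch below uses recall (the share of confirmed fraud the model flagged) as that metric. The row format, the segment names, and the `max_drop` threshold are assumptions made for illustration.

```python
from collections import defaultdict


def recall_by_segment(rows):
    """rows: iterable of dicts with 'segment', 'is_fraud', and 'flagged' keys."""
    caught, total = defaultdict(int), defaultdict(int)
    for r in rows:
        if r["is_fraud"]:  # recall only considers confirmed fraud cases
            total[r["segment"]] += 1
            caught[r["segment"]] += int(r["flagged"])
    return {s: caught[s] / total[s] for s in total}


def drifting_segments(reference, current, max_drop=0.15):
    """Segments whose recall fell by more than max_drop between the two windows."""
    ref, cur = recall_by_segment(reference), recall_by_segment(current)
    return sorted(s for s in ref if s in cur and ref[s] - cur[s] > max_drop)
```

An assistant can propose which segmentations to try (channel, country, account age band), while a check like this confirms whether the drop is real before anyone retrains a model.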

Another critical application is model explainability. Because machine learning models often function as black boxes, analysts frequently struggle to understand why a specific transaction received a high risk score. LLMs help translate these complex outputs into human-readable explanations, making it easier for analysts to investigate and validate suspicious events.
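In practice this often means giving the LLM the model's score plus its largest feature contributions and asking for a plain-language summary. The sketch below only builds the prompt and does not call any model. It assumes per-feature contributions are already available from an attribution method, and the feature names and prompt wording are invented for the example.

```python
def top_contributions(contributions, k=3):
    """Return the k features with the largest absolute contribution."""
    return sorted(contributions.items(),
                  key=lambda kv: abs(kv[1]), reverse=True)[:k]


def build_explanation_prompt(score, contributions, k=3):
    """Assemble a prompt asking an LLM to explain a risk score."""
    lines = [f"- {name}: {value:+.2f}"
             for name, value in top_contributions(contributions, k)]
    return (
        f"A fraud model scored this transaction {score:.2f} (0 = safe, 1 = risky).\n"
        "The largest feature contributions were:\n"
        + "\n".join(lines)
        + "\nExplain in plain language what drove this score. "
        "Use only the features listed and say so if they are insufficient."
    )
```

Restricting the prompt to listed features is a deliberate choice: it keeps the explanation tied to what the model actually used and makes invented reasons easier to spot.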

This transparency improves both internal analysis and external communication. Customer support teams can better explain decisions to users who were incorrectly declined, while fraud analysts can more easily identify and patch weaknesses in model performance. Product teams, in turn, gain a clearer understanding of how fraud controls impact the customer experience.

Once again, the role of LLMs is not replacing the model itself. Instead, it improves the processes surrounding the model’s development, maintenance, and interpretation.

The future fraud stack

The emergence of Agentic AI does not signal the end of rules, machine learning, or manual investigations. Instead, it represents the next stage in the evolution of fraud prevention systems.

Rules will continue to provide targeted logic for specific fraud patterns. Machine learning models will continue to deliver scalable risk scoring across large datasets. Human investigators will still handle complex cases that require contextual reasoning and judgement. These pillars aren’t going away.

However, AI is now beginning to run vertically through all of these layers, improving how each one operates.

As it assists analysts during investigations and accelerates the research behind rules and models, Agentic AI is already shifting how teams operate. Fraud departments are starting to achieve higher output with fewer resources, allowing practitioners to move away from manual data synthesis and toward solving the most challenging, adversarial problems.

The future fraud stack is not a choice between technologies or humans; it is the effective combination of both. In this system, AI acts less like a new layer and more like a force multiplier that runs through the entire stack.
