The old saying is “If you have a hammer, everything looks like a nail.”

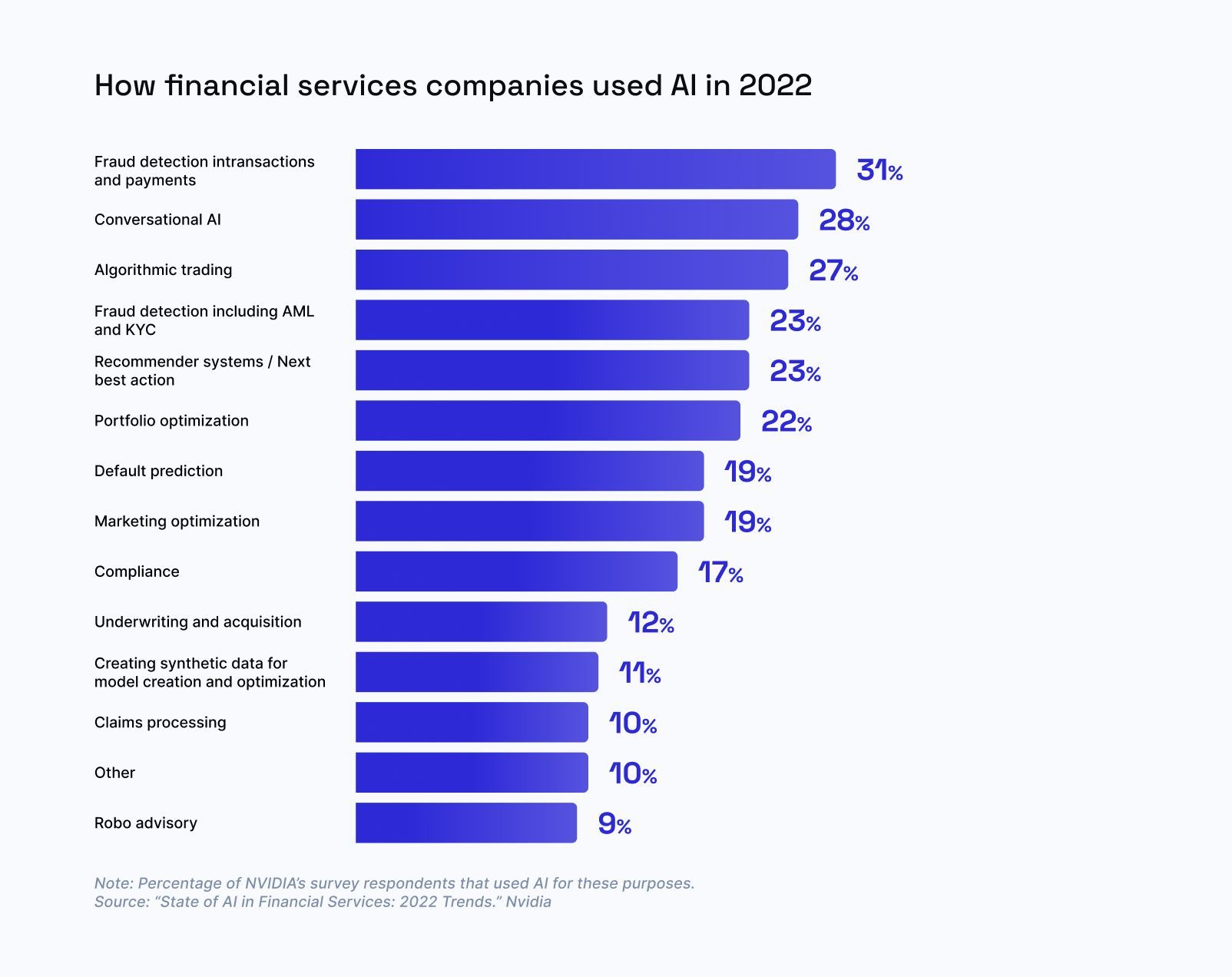

GenAI is the new hammer, and everything in Fintech is the nail. Perhaps with good reason, because in Financial Services the single largest use case for AI today is in Fraud, Risk and Compliance.

This post examines where GenAI adds value in fraud, risk and compliance use cases, and how we’re thinking about it at Sardine.

Generative AI could be a useful tool for our industry, but to become that we have to understand why it is different to existing approaches.

In this article, I’m going to differentiate between –

(1) machine learning (supervised or unsupervised): when we say machine learning, we are referring to supervised learning techniques like logistic regression, decision trees and gradient boosted decision trees, or unsupervised learning techniques like k-means clustering.

(2) neural networks like Convolutional Neural Networks (CNNs) or Long Short-Term Memory networks (LSTMs): a subset of machine learning techniques, loosely inspired by the human brain, built from an input layer, a series of hidden layers and finally an output layer. Each layer consists of multiple units, and each unit mimics a neuron (it applies a linear transformation to its inputs, followed by a non-linear activation function). You typically train a neural network by adjusting the weights of its connections to minimize a predefined loss function (via a technique called backpropagation).

(3) generative AI e.g. ChatGPT, AutoGPT: an extension of neural networks, trained to create realistic new content e.g. strikingly realistic images (Midjourney) or coherent text resembling human writing (ChatGPT). Approaches vary: large language models like ChatGPT are built on the transformer architecture, many image generators use diffusion models, and another well-known technique is the Generative Adversarial Network (GAN), in which two neural networks compete with each other: one is trained to create new content (the generator) and the other to distinguish generated samples from real ones (the discriminator).

First, I’ll offer a short intro to Sardine, to contextualize the applications of AI/ML in our domain.

What is SardineAI (or Sardine for short)?

Sardine is a behavior-infused risk and payments platform. Our focus on behavior biometrics (how you type, swipe, scroll, move the mouse, hold the phone, etc.) is what differentiates us from other fraud and risk providers.

We offer three products today:

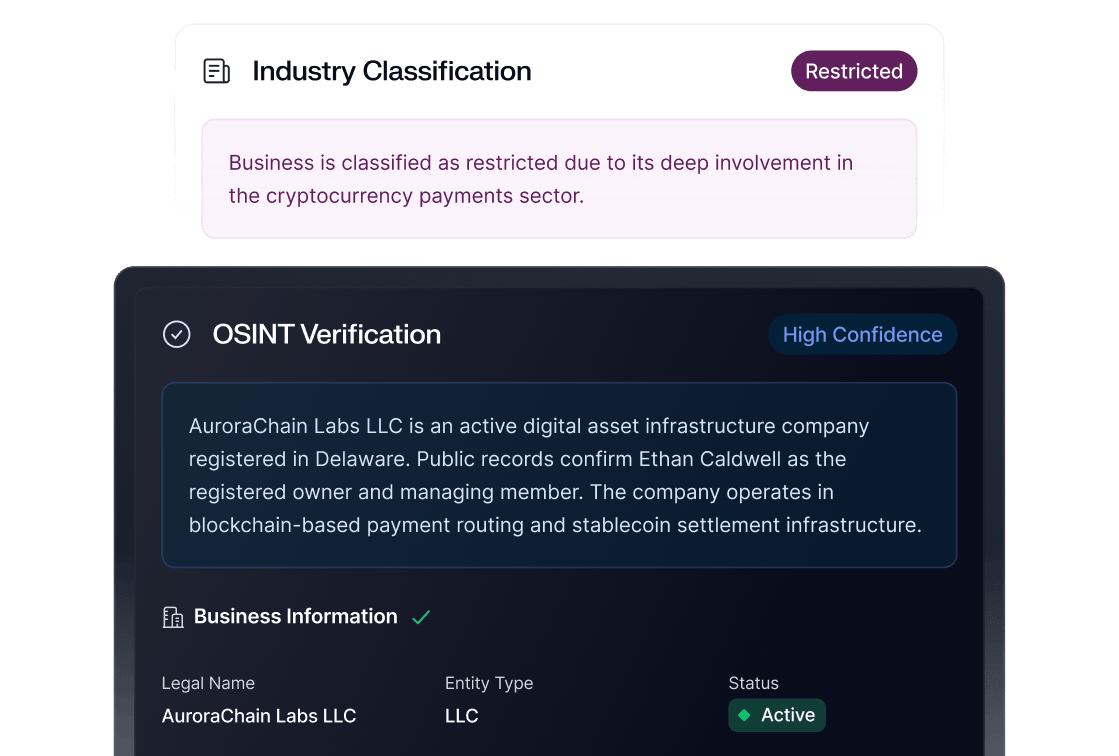

Risk: We offer a unified API for the fraud & compliance needs of any financial services company, including fintechs, banks, BaaS, payment processors, and high-risk industries such as gift card exchanges, online gaming/gambling, cannabis payments & crypto.

The fraud & compliance use cases we cover include:

- KYC and Identity fraud at account opening

- Payment fraud during the purchase flow, or when you are funding a wallet with cards or bank transfers

- Account takeover fraud at logins

- Phishing and social engineering scam detection anytime during the user journey

- Issuing fraud to detect card testing/enumeration attacks or flag stolen/unauthorized card use

- Sponsor bank OS: an operating system for sponsor banks to manage KYC/AML and fraud policies across their fintech portfolio

Payments: We offer payments in regulated industries where bundling fraud indemnification & KYC/AML gives us the edge. Industries we cover today include: crypto on-ramping via ACH in the US and Cards in 80+ countries including US & EU/UK.

SardineX: We recently announced the launch of our SardineX consortium, which allows banks, fintechs, payment processors and networks to work collaboratively to detect ACH fraud and Authorized Push Payment (APP) scams, and to augment their Suspicious Activity Reports (SARs) with information from other participating institutions under the provisions of 314(b).

Fraudsters are the biggest users of GenAI today

Given that fraud prevention is one of our core use cases, GenAI has huge implications for us. Fraudsters are often the first to adopt any new technology, and never before have they had such easy access to such powerful tech.

Generative AI "generates" new outputs in response to a prompt or input. It might output video, images or text. Combined with deep-fakes (a separate but related subject), this creates new possibilities for fraudsters like:

- Video cloning in romance scams: We are already seeing fraudsters create convincing videos of attractive but unreal people to lure users of online dating apps into the classic romance scam, e.g. getting unsuspecting victims to send them money. Cloned voices are just as convincing: in a recent survey, 70% of people were unable to tell a real voice from a cloned one.

- Voice cloning in family scams: We are increasingly seeing fraudsters clone someone's voice and call a family member, pretending to be in distress and asking for money. In this example a woman received a call from what appeared to be her daughter in distress.

- Voice cloning in account takeovers: Voice-based authentication is no longer reliable for logging into a bank or other financial services provider, because it is very easy to take a few seconds of someone’s voice and train a GenAI model to answer any question asked during the bank login process. In this example fraudsters cloned the voice of a company director to steal $35m in Dubai.

Extrinsic AI vs Intrinsic AI:

All of us lead increasingly public lives online, so our voices, photos and videos can easily be found online and hence cloned by AI. We call this Extrinsic AI.

We think the future of fraud prevention will be a battle of bots:

“Extrinsic AI vs. Intrinsic AI”

Intrinsic AI refers to models trained on behavior intrinsic to us: how we type, swipe, scroll, move the mouse, or hold the phone. This data is not publicly or easily available today, and hence fraudsters cannot train models to mimic you. Intrinsic AI is essentially the bet we are taking at Sardine. As such, we infuse users’ behavior into fighting fraud.

For example, if voice authentication can no longer be relied upon as a second authentication factor at a bank, does that mean the technology is completely dead? Not quite, if we augment voice authentication with intrinsic AI trained on behavior signals.

For example: before using voice authentication, did the fraudster copy/paste your password whereas you normally use a password manager to auto-fill it? Or was the speed at which the fraudster typed your password different from how you normally type it?
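The two questions above can be turned into simple checks. What follows is only a minimal sketch, not how Sardine's models work: the `LoginBehavior` fields, the entry-method labels and the 3-standard-deviation cutoff are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import List, Optional


@dataclass
class LoginBehavior:
    # Hypothetical labels: "autofill", "typed" or "paste".
    password_entry: str
    # Average milliseconds per keystroke; None when nothing was typed.
    typing_ms_per_char: Optional[float] = None


def behavior_deviations(history: List[LoginBehavior],
                        current: LoginBehavior,
                        z_cutoff: float = 3.0) -> List[str]:
    """Return the reasons the current login differs from the user's history."""
    reasons = []

    # Check 1: a password entry method never seen before on this account.
    usual_methods = {h.password_entry for h in history}
    if current.password_entry not in usual_methods:
        reasons.append(f"new password entry method: {current.password_entry}")

    # Check 2: typing speed far from the user's norm (typed entries only).
    speeds = [h.typing_ms_per_char for h in history
              if h.password_entry == "typed" and h.typing_ms_per_char is not None]
    if (current.password_entry == "typed"
            and current.typing_ms_per_char is not None
            and len(speeds) >= 3):
        mu, sigma = mean(speeds), pstdev(speeds)
        if sigma > 0 and abs(current.typing_ms_per_char - mu) / sigma > z_cutoff:
            reasons.append("typing speed deviates from the user's norm")

    return reasons


# A user who always auto-fills, followed by a login where the password is pasted.
autofill_history = [LoginBehavior("autofill") for _ in range(5)]
print(behavior_deviations(autofill_history, LoginBehavior("paste")))
```

A production system would learn these thresholds from data rather than hard-code them; the point is only that the signals come from the user's own past behavior, which a voice clone does not carry.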

This framing of Extrinsic vs Intrinsic doesn’t require the word AI either.

For example, one of the easiest ways to protect yourself and your family from voice-cloning family scams is to agree on a secret phrase and share it over a secure medium (e.g. when you meet in person, or over an encrypted chat app). This secret phrase is “intrinsic” and hence cannot be guessed by AI.

To GenAI or not?

So you have GenAI as a hammer, do you use it on every nail you find? If you are a financial services company, you probably have needs similar to Sardine’s. But not every problem needs GenAI. Let’s dive in!

(1) Machine learning use cases

Machine learning (supervised and unsupervised) is infused throughout our risk platform. Machine learning works very well in cases where we have labeled data. Fraud prevention lends itself very well to that, because if someone stole your payment method (your card number, your cheque or your bank account details), you will eventually notice and file a chargeback with your card issuer or the bank. This allows us to train supervised models like logistic regression, decision trees, gradient boosted decision trees, etc. The dirty little secret in fraud prevention has been that with logistic regression or CatBoost, you can get 99% of the way there.
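To make the labeled-data point concrete, here is a minimal sketch of logistic regression trained with plain gradient descent on chargeback labels. The two features (`new_device`, `amount_in_thousands`), the toy data and the hyperparameters are invented for illustration and are not Sardine's actual features or model.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w: List[float], b: float, x: List[float]) -> float:
    """Estimated probability that a payment ends in a chargeback."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train_logistic_regression(X: List[List[float]], y: List[int],
                              lr: float = 0.5,
                              epochs: int = 2000) -> Tuple[List[float], float]:
    """Batch gradient descent on the average log loss."""
    n, n_features = len(X), len(X[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi  # derivative of log loss w.r.t. the logit
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


# Toy rows: [new_device (0/1), amount_in_thousands]; label 1 = chargeback filed.
X = [[0, 0.1], [0, 0.3], [0, 0.2], [1, 0.9], [1, 1.2], [1, 0.8], [0, 0.4], [1, 1.0]]
y = [0, 0, 0, 1, 1, 1, 0, 1]

w, b = train_logistic_regression(X, y)
print(round(predict_proba(w, b, [1, 1.1]), 3))  # new device, large amount
print(round(predict_proba(w, b, [0, 0.2]), 3))  # known device, small amount
```

In practice you would reach for a library implementation; the hand-rolled loop is here only to show how little machinery the model itself needs once the labels exist.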

The other secret in the world of fraud prevention: machine learning is actually commoditized. What truly differentiates Sardine from every other fraud player is that we produce our own data via our Device Intelligence & Behavior Biometrics SDK (DI/BB) and that we are constantly procuring best-in-class data from new sources. Often we even help companies large and small realize the value of the data they are sitting on and build a case for productizing it. For example, it took me 6+ years to procure an esoteric data source after pounding my head against the wall for it and asking everyone under the sun if they could build it.

- Identity fraud: We use supervised machine learning models to risk-score a user at account opening to identify the likelihood that the user is using their own identity or a stolen/synthetic identity. We use a variety of unsupervised and supervised models to identify risky devices that are typically used by fraudsters, e.g. whether a device sits in a data center, and whether it is a true device or a fake one (scripts, bots, emulators). We’ve built machine learning models to classify IP addresses as proxies/VPNs vs. not, or residential vs. commercial.

- Payment fraud: We use supervised machine learning models to risk-score a payment method (card or bank) to identify the likelihood that the user is using their own payment method or someone else’s.

- Account takeover fraud: We use machine learning to build behavior biometrics profiles of how users type or hold their phones. This allows us to answer whether the same user is logging into a bank account, or whether someone else picked up their phone and is logging in.

- AML/CTF Transaction monitoring: One of the newest areas finding an application for machine learning is AML/CTF transaction monitoring, where AML = Anti-Money Laundering and CTF = Counter-Terrorism Financing.

A short primer on how regulated financial institutions detect money laundering or terrorism financing and how Sardine helps them:

- Sardine provides a rule bank with 100s of common money laundering or terrorism financing typologies, e.g. is someone moving large amounts of money by structuring it, i.e. breaking it into chunks just under the $10k threshold so that they stay below the radar?

- Compliance operations teams and BSA/AML officers then review flagged alerts, and use our network graph tool and user transaction search tools to identify whether multiple users are connected to a suspected user via shared devices or shared IPs, i.e. they identify a ring.

- Finally, they file a SAR with the relevant authority, e.g. FinCEN in the US or the National Crime Agency (NCA) in the UK.

- Then they wait – usually they never hear back from the regulator (and that’s what makes this process highly suboptimal because of the lack of true ground truth).
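As an illustration of the structuring typology mentioned in the first step above, here is a minimal sketch of such a rule. It is not a rule from Sardine's rule bank: the `lower_bound`, 7-day `window` and `min_count` values, and the `(user_id, timestamp, amount)` tuple layout, are assumptions chosen for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Iterable, Set, Tuple

Transaction = Tuple[str, datetime, float]  # (user_id, timestamp, amount in USD)


def flag_structuring(transactions: Iterable[Transaction],
                     threshold: float = 10_000,
                     lower_bound: float = 9_000,
                     window: timedelta = timedelta(days=7),
                     min_count: int = 3) -> Set[str]:
    """Flag users with at least `min_count` transactions just under
    `threshold` (between `lower_bound` and `threshold`) inside one window."""
    near_threshold = defaultdict(list)
    for user_id, ts, amount in transactions:
        if lower_bound <= amount < threshold:
            near_threshold[user_id].append(ts)

    flagged = set()
    for user_id, stamps in near_threshold.items():
        stamps.sort()
        start = 0
        # Sliding window over the sorted timestamps.
        for end in range(len(stamps)):
            while stamps[end] - stamps[start] > window:
                start += 1
            if end - start + 1 >= min_count:
                flagged.add(user_id)
                break
    return flagged


txs = [
    ("u1", datetime(2023, 5, 1), 9500), ("u1", datetime(2023, 5, 2), 9800),
    ("u1", datetime(2023, 5, 3), 9900),                 # three near-threshold in 3 days
    ("u2", datetime(2023, 5, 1), 9500), ("u2", datetime(2023, 5, 2), 500),
    ("u3", datetime(2023, 5, 1), 9900), ("u3", datetime(2023, 6, 1), 9900),
    ("u3", datetime(2023, 7, 1), 9900),                 # near-threshold but a month apart
]
print(flag_structuring(txs))
```

A rule like this only raises an alert; the review and SAR decision described in the later steps still sit with the compliance team.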

For AML/CTF, the ideal way to train a supervised ML model would be to get feedback data from the financial regulator, i.e. did the SAR I filed lead to an arrest? In the absence of true feedback data, we can only strive for the next best thing: we train a model that surfaces suspicious money laundering transactions based on prior feedback from the compliance ops team (the SAR filing step above), i.e. did this alert lead to a SAR being filed?

(2) Neural network use cases

- Documentary KYC and Liveness checks: Neural network models like convolutional neural networks (CNNs) are highly useful for solving problems like:

- Detecting a bounding box to find the corners of a passport, and extracting the face from the passport

- Comparing the face on the passport with the face captured during a liveness check

- Account takeovers: Neural networks like Long Short-Term Memory networks (LSTMs) are great at looking at a sequence of events and classifying whether it is normal or anomalous. For example, here’s a sequence of events that’s typical of a bank customer being socially engineered:

- For a customer, the bank sees a new login from a brand new device-id or device fingerprint and a brand new IP (Sardine’s DI/BB SDK provides this info)

- The bank sees that the password was copy/pasted whereas in the past, for this account, the password was always auto-filled by the browser (Sardine’s DI/BB SDK provides this type of info)

- Finally, the bank sees a large withdrawal going out of the customer’s account to a brand new counterparty (new bank/SWIFT counterparty, or new email/phone number for Zelle/P2P transfers).

Identifying these types of event sequences is what LSTMs are really great at!
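To show what "a sequence of events" looks like as model input, the sketch below encodes event names as integer tokens (the usual first step before feeding a sequence model) and adds a hand-written ordered-pattern check as a baseline. To be clear, this is not an LSTM: a trained model would learn such patterns from labeled sessions instead of having them written down. The event names are hypothetical.

```python
from typing import Dict, List, Sequence

# Hypothetical event names for the social engineering sequence described above.
RISKY_PATTERN = (
    "login_new_device_and_ip",
    "password_pasted",
    "large_withdrawal_new_counterparty",
)


def encode(events: Sequence[str], vocab: Dict[str, int]) -> List[int]:
    """Map event names to integer ids (0 is left free for padding).
    New event names are added to `vocab` as they are seen."""
    return [vocab.setdefault(e, len(vocab) + 1) for e in events]


def contains_in_order(events: Sequence[str], pattern: Sequence[str]) -> bool:
    """True if `pattern` occurs in `events` in order, with gaps allowed."""
    it = iter(events)
    # `step in it` advances the iterator until it finds `step`,
    # so each later step must appear after the previous one.
    return all(step in it for step in pattern)


session = [
    "login_new_device_and_ip",
    "password_pasted",
    "view_balance",
    "large_withdrawal_new_counterparty",
]
vocab: Dict[str, int] = {}
print(encode(session, vocab))
print(contains_in_order(session, RISKY_PATTERN))
```

The encoded id sequences are what you would batch and pad for an LSTM; the rule-based check is a useful sanity baseline to compare a trained model against.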

(3) Generative AI use cases:

Most of the use cases above, which are well suited to machine learning or neural networks, use data with little or no linguistic structure e.g. transactions, events, network packets, etc.

Generative AI is really great at

- Problems involving data that has a lot of linguistic structure e.g. free-form text.

- Automating administrative tasks that robotic process automation (RPA) could not because there are too many variables

A mental model is to think of an LLM as a very fast, college-educated student. Enhanced with context (like help docs, or a vector database of proprietary data), it can support fraud and compliance use cases much more accurately.
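Here is a minimal sketch of what "enhanced with context" can mean in practice: retrieve the most relevant snippets for a question and place them in the prompt. Bag-of-words cosine similarity stands in for the embeddings and vector database a real system would use, the snippets are made up for the example, and no LLM is actually called.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: List[str], k: int = 2) -> List[str]:
    """Return the k snippets most similar to the question."""
    q = tokenize(question)
    return sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)[:k]


def build_prompt(question: str, docs: List[str], k: int = 2) -> str:
    context = "\n".join(f"- {d}" for d in retrieve(question, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Invented help-doc snippets, for illustration only.
docs = [
    "A high device risk score means the device looks like an emulator or bot.",
    "ACH returns are disputed with a Proof of Authorization form.",
    "Behavior biometrics compares typing and swiping patterns to the user's history.",
]
question = "What does a high device risk score mean?"
print(build_prompt(question, docs, k=1))
```

The resulting string is what would be sent to the model; swapping the similarity function for embedding search changes retrieval quality, not the overall shape.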

We’ve identified the following use cases where generative AI can be beneficial to our Risk platform or Payments product:

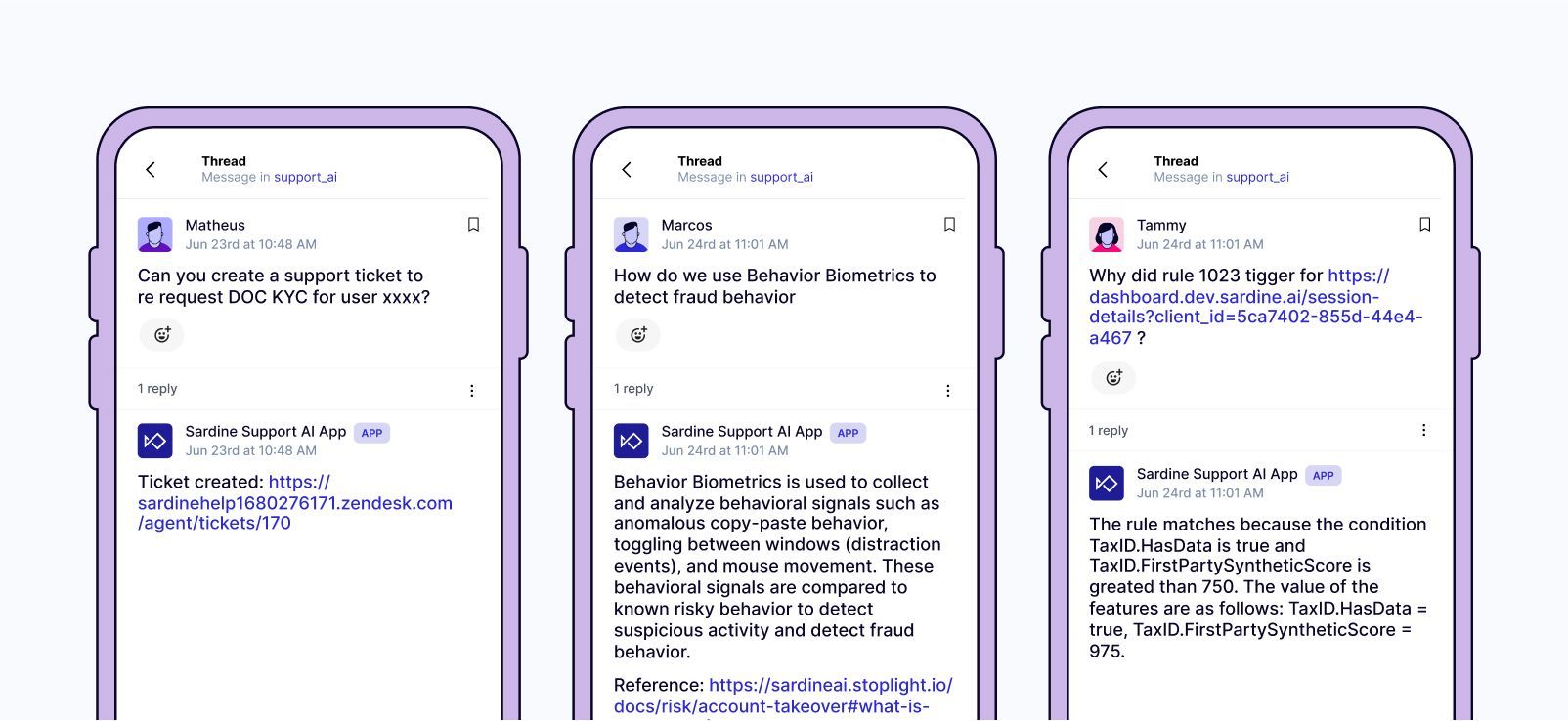

- Support tools: Last week during our Hackathon, the project that won the Grand prize used OpenAI’s GPT-3.5 model to automatically answer common support questions that our integration, support or fraud/compliance ops teams spend hours answering. Many of these questions are repetitive and have already been asked by someone else. We trained a GenAI model on our own knowledge base from our docs site, Notion pages, Gong calls, etc. Additionally, we gave it the capability to extract data from our data warehouse to answer risk investigation questions. And we created a Slack chat bot that our customers can use to do the following:

- Understand what the various features in our fraud or compliance scoring mean

- Explain why Sardine marked a user or transaction as risky

- Automatically create a new Zendesk ticket to either further investigate a user or require step-ups like doc KYC

- Card Chargeback and ACH returns handling: In our payments product, we often have to dispute the card chargebacks or ACH returns we get from our processors or banks, to protect ourselves from friendly fraud when we have strong reasons to believe that the customer did make the transaction. This is an application really well suited to AutoGPT, as most of the work here is repetitive. For example, let’s consider a customer who has been transacting with our crypto on-ramp for several months, for whom we have prior history of the device identifiers and IP addresses they use. All of a sudden, we get a card chargeback or an ACH return for a recent purchase. The counter-dispute or the Proof of Authorization (POA) for this would involve:

- Comparing the current device-id with previous device-ids

- Comparing the current IP with previous IPs

- If those are the same, then prefill the chargeback dispute form or POA form

- Product marketing and Sales enablement: One of our biggest recent pain points as a company has been distilling our knowledge base about various products into succinct product marketing memos. While hiring product managers, we focus on finding those who come from the fraud/compliance domain and know how to execute (not necessarily how to market their product) because we first and foremost want to find folks who can talk the talk and walk the walk. This was colored by my prior experience that it’s very rare to find someone who excels at both heads-down execution and product marketing. We are super excited to have a new head of marketing, Eduardo Lopez, join us last week. However, as that search was taking way too long, we were considering training GPT-3.5 on all our Notion pages, Gong customer calls, 1000s of Slack channels and Zendesk tickets to create the best product marketing copy.

- SAR narrative generation: This is an area where a combination of GPT-3.5 and AutoGPT makes for a killer use case. Given a set of users that have been identified by a BSA/AML officer as belonging to the same ring, auto-create the narrative by looking at their transaction activities and how these users are linked to each other.
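The device and IP comparison described for chargeback and ACH return disputes above is mechanical enough to sketch directly. This is an illustration under assumptions, not Sardine's dispute workflow: the `Purchase` fields, the returned form keys and the choice to leave non-matching cases for human review are all invented here.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Purchase:
    purchase_id: str
    device_id: str
    ip_address: str


def draft_dispute(disputed: Purchase, history: List[Purchase]) -> Optional[Dict]:
    """Prefill dispute evidence when the disputed purchase came from a
    device AND an IP already seen on this customer's earlier purchases.
    Returns None when the evidence is missing, leaving the case to a human."""
    same_device = [p.purchase_id for p in history if p.device_id == disputed.device_id]
    same_ip = [p.purchase_id for p in history if p.ip_address == disputed.ip_address]
    if not (same_device and same_ip):
        return None
    return {
        "purchase_id": disputed.purchase_id,
        "device_id": disputed.device_id,
        "ip_address": disputed.ip_address,
        "prior_purchases_same_device": same_device,
        "prior_purchases_same_ip": same_ip,
    }


history = [
    Purchase("p1", "dev-A", "203.0.113.7"),
    Purchase("p2", "dev-A", "203.0.113.7"),
    Purchase("p3", "dev-A", "203.0.113.9"),
]
print(draft_dispute(Purchase("p4", "dev-A", "203.0.113.7"), history))
print(draft_dispute(Purchase("p5", "dev-Z", "198.51.100.1"), history))
```

An agent-style tool would wrap steps like this with form filling and submission; keeping the matching logic deterministic makes the evidence in each dispute easy to audit.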

Conclusion

GenAI is one of the most transformative technologies that we’ve come across in a long time, and it has huge implications for the financial services industry in general and for companies like ours that provide fraud prevention or compliance tools.

While fraud prevention does become a lot harder as fraudsters begin to use GenAI, it is also a great opportunity for us to develop intrinsic AI to fight what’s coming!