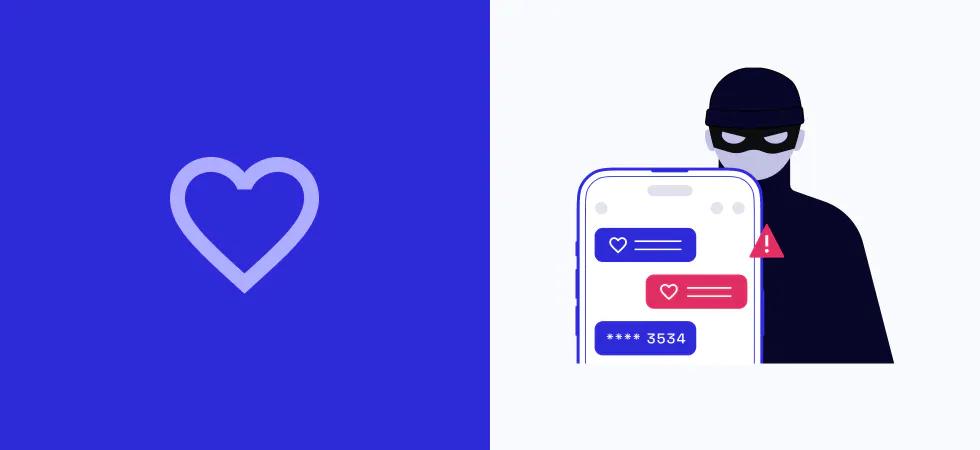

Last year, 70,000 people reported to the Federal Trade Commission that they'd been the victim of a romance scam. Their losses totaled $1.3 billion.

So what are romance scams, and how is AI part of the problem?

Romance scams occur when a criminal adopts a fake online identity to gain a victim’s affection and trust. The scammer then uses the illusion of a romantic or close relationship to manipulate and/or steal from the victim.

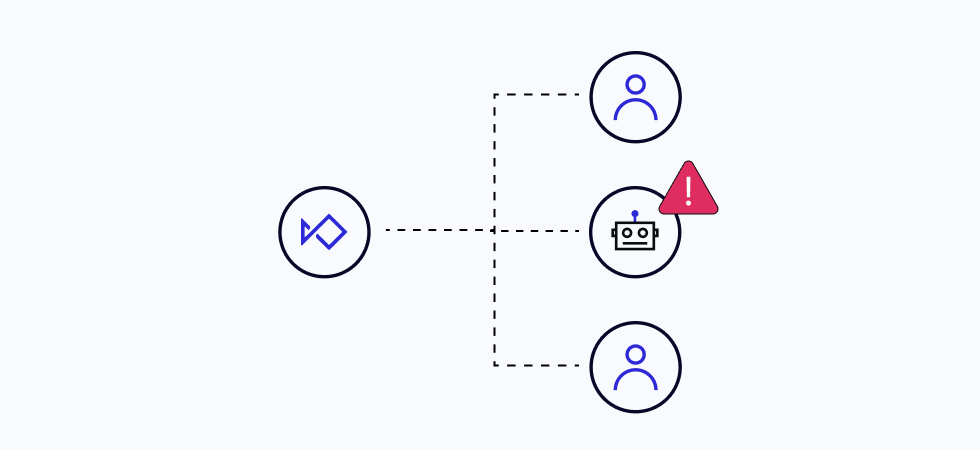

Scammers have begun to take this one step further by using AI to generate messages. Don’t think you would get tricked by an AI love note? Think again. McAfee's Modern Love Research Report found that 7 in 10 people could not tell whether a love letter had been written by AI. Until now, romance scams were limited by the number of conversations a scammer could sustain. With AI, a chatbot can hold potentially thousands of conversations with victims simultaneously.

AI romance scams are becoming more layered: ChatGPT can quickly write a persuasive love letter for scammers, AI chatbots can maintain many simultaneous conversations with victims, and AI data-aggregation tools can help cybercriminals identify and target vulnerable individuals.

However, just as AI has become part of the problem, it must also be part of the solution.

The best way to fight AI is with AI that is guided by the best fraud and compliance professionals, and with collaboration across the industry.

Fighting scams takes teamwork. We can catch more scams before they happen if we understand the types of scams and what to look for.

Ways to detect romance scams

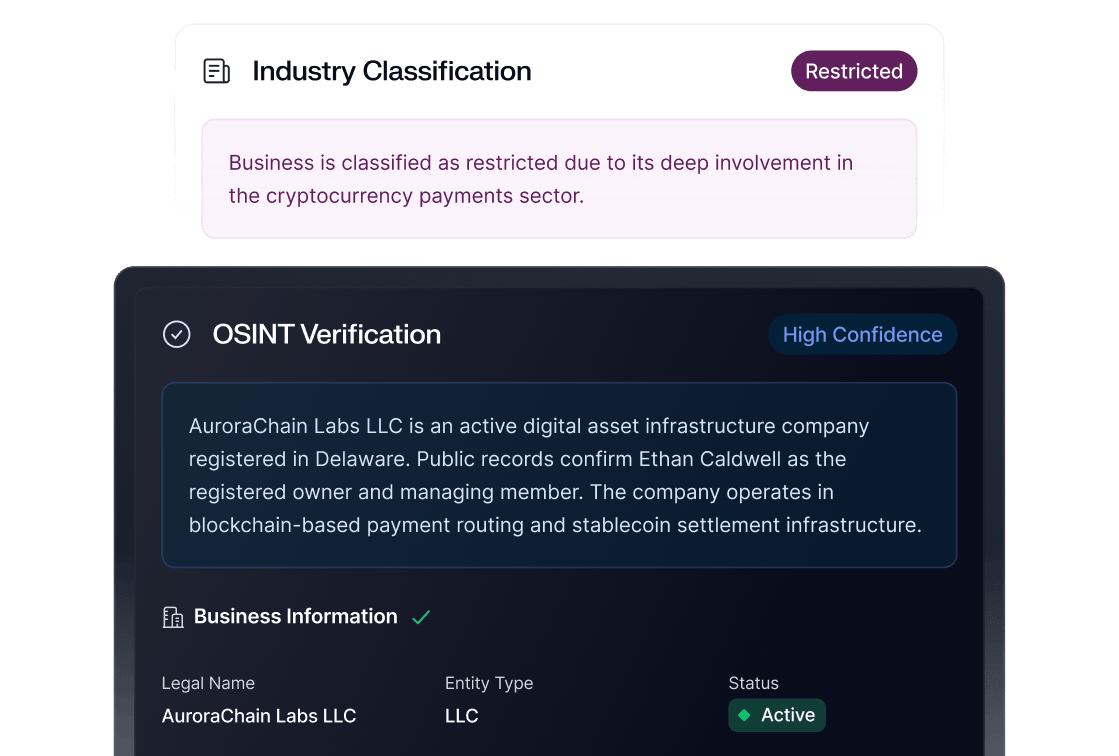

Romance scams are difficult to detect because victims pass KYC checks with their own identity and connect their own bank accounts to authorize an ACH transaction. To the fintech company, it looks like a valid transaction.

As fraudsters use increasingly sophisticated methods to perpetrate scams, companies must stay updated with the latest detection and prevention techniques.

Here are some potential methods for detecting scams generated by AI models:

- User Behavior: One approach is to analyze the text of messages or other communications for specific fraud indicators. Companies could use natural language processing (NLP) techniques to look for language patterns that suggest fraudulent intent. AI-generated text often has a recognizable “way” of phrasing things. Detecting it can signal a scam in progress.

- User Device: Another approach is to analyze the device associated with a particular user. This could involve looking for activity patterns that tend to indicate fraud at account onboarding, funding, or payment.

- Transaction Monitoring: This could include monitoring user behavior for anomalies such as sudden spikes in transaction volumes, changes in transaction types, or deviations from established activity patterns.

- Machine learning: Machine learning algorithms can be trained on large datasets of known fraudulent communications or transactions to identify patterns or signatures associated with fraud.

- Collaboration: Collaboration helps identify persistent bad actors. By analyzing the behavior of similar users or accounts, companies can stop repeat offenders.
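The transaction-monitoring idea in the list above can be sketched in a few lines. This is a minimal illustration, not any vendor's production logic: it compares a new payment amount against a user's own history using a robust z-score (median and median absolute deviation). The function name `is_amount_anomalous`, the threshold of 3.5, the minimum history length, and the sample amounts are all assumptions made for the example.

```python
from statistics import median


def is_amount_anomalous(history, amount, threshold=3.5, min_history=5):
    """Return True if `amount` deviates sharply from the user's past amounts.

    Uses a robust z-score built from the median and the median absolute
    deviation (MAD), which is less distorted by a few large past payments
    than a mean/standard-deviation score. Threshold values are assumptions.
    """
    if len(history) < min_history:
        # Too little history to establish a baseline; do not flag.
        return False
    center = median(history)
    mad = median([abs(x - center) for x in history])
    if mad == 0:
        # The typical spread is zero, so treat any amount that differs
        # from the median as unusual.
        return amount != center
    # 0.6745 scales the MAD so the score is comparable to a standard z-score.
    robust_z = 0.6745 * abs(amount - center) / mad
    return robust_z > threshold


# Hypothetical history of small, regular payments.
past_amounts = [42.0, 38.5, 51.0, 40.0, 45.0, 39.0]
print(is_amount_anomalous(past_amounts, 47.0))    # an ordinary payment
print(is_amount_anomalous(past_amounts, 5000.0))  # a sudden large transfer
```

A check like this only covers amount spikes. In practice it would sit alongside the device and behavior signals, since a victim-authorized payment can look normal on amount alone.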

It's worth noting that fraudsters are constantly evolving their techniques and adapting to new security measures, so companies need to remain vigilant and adapt their fraud detection strategies accordingly. It's also important to be aware of the limitations of any given approach, as fraudsters may be able to find ways to circumvent even the most sophisticated security measures.

The ideal combination: Device, Behavior, ML, Humans and Collaboration.

Sardine combines device and behavior insights with cutting-edge ML models to catch more fraud.

Device, Behavior, and Transactions

When you understand a device and how it is used, you can spot the hidden clues scammers leave behind.

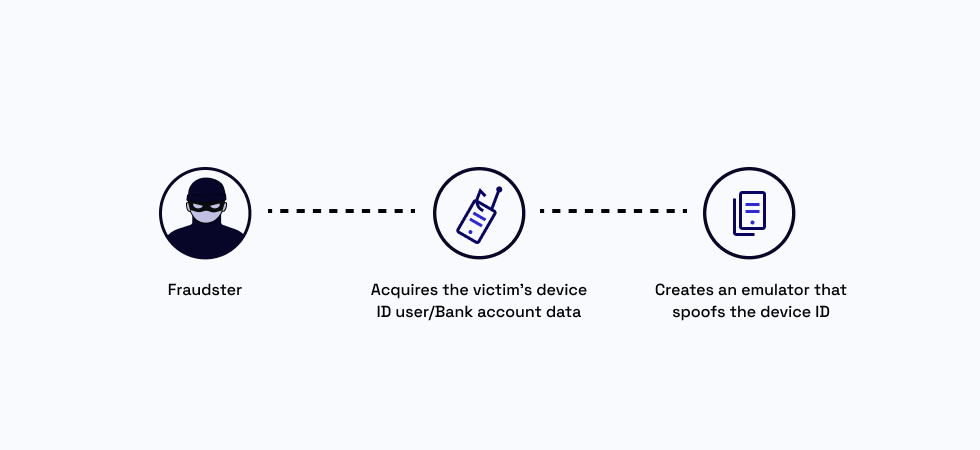

1. Device Signals: Emulators

Fraudsters never want to reveal their own device or IP profile, so they are likely to use an emulator instead of a real mobile device or, to operate at scale, rent a mobile data center. To hide their IP, they use proxies, VPNs, or Tor.

Our device and behavior intelligence SDK collects telemetry and other low-level signals, which allow us to detect whether someone is using a real device or an emulator, and whether someone is using a data center (where all phones sit in the same orientation).
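The orientation clue can be illustrated with a small heuristic. This is a sketch under stated assumptions, not Sardine's SDK or detection logic: it assumes each session reports a hypothetical `ip`, `pitch`, and `roll` (angles in degrees), groups sessions by IP, and flags groups where many devices sit at nearly the same angle, as racked phones would. The field names, thresholds, and sample data are invented for the example.

```python
from collections import defaultdict
from statistics import pstdev


def find_suspected_device_farms(sessions, min_devices=5, max_spread_deg=1.0):
    """Return IPs whose devices all report nearly identical orientation.

    `sessions` is an iterable of dicts with hypothetical keys
    "ip", "pitch", and "roll" (angles in degrees).
    """
    by_ip = defaultdict(list)
    for s in sessions:
        by_ip[s["ip"]].append((s["pitch"], s["roll"]))

    flagged = []
    for ip, readings in by_ip.items():
        if len(readings) < min_devices:
            continue  # too few devices on this IP to suspect a farm
        pitch_spread = pstdev([p for p, _ in readings])
        roll_spread = pstdev([r for _, r in readings])
        # Phones held by real people tilt in many directions; racked
        # phones barely differ from one another.
        if pitch_spread <= max_spread_deg and roll_spread <= max_spread_deg:
            flagged.append(ip)
    return sorted(flagged)


# Hypothetical data: six racked phones on one IP, five hand-held on another.
rack = [{"ip": "203.0.113.7", "pitch": 0.2 + 0.1 * i, "roll": 89.9}
        for i in range(6)]
people = [{"ip": "198.51.100.4", "pitch": p, "roll": r}
          for p, r in [(10, 5), (60, -20), (35, 80), (5, 0), (75, 12)]]
print(find_suspected_device_farms(rack + people))
```

Grouping by IP alone is a simplification. Many legitimate users can share one address, which is why the sketch also requires the orientation readings to be nearly identical before flagging.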

2. Behavior Signals: Remote desktops

In this attack vector, scammers use remote screen-sharing tools such as TeamViewer, AnyDesk, and Citrix. The hardest part about these scams is that the victim is using their own device and their own IP, providing their own eKYC information, and even uploading their own documentary KYC and liveness checks.

So everything, from the point of view of KYC, would check out. However, the fintech would be missing the most important part here: the victim’s computer was being remotely controlled at certain moments.

Sardine has built proprietary technology to detect these screen-sharing tools in a privacy-preserving way. We look at changes in mouse movement and screenshots, or detect whether a call is in progress, which can indicate that someone is coaching the end user.

At Sardine, we are the only provider that can detect that a customer has used remote screen-sharing software. One customer was able to reduce their fraud by 7x and now recommends Sardine to all of their partners and banks because of this unique ability.

3. Transaction Signals: Anomaly Detection

The best way to counter generalized AI is with specialized AI.

Sardine’s proprietary machine learning models are trained to detect anomalies. Sometimes, something looks weird. Historically, finding these patterns was left to fraud teams who worked manually and had to churn through mountains of data.

Sardine will flag them automatically, saving time and effort for clients.

Sardine spots deviations from a baseline and can automatically adjust the response we give clients over time. Our models are constantly being retrained and backtested. As scams evolve, Sardine is evolving too.

A scam won’t show up in a transaction until the payment has already been authorized. Device and user behavior signals allow Sardine to spot risk before a transaction happens.

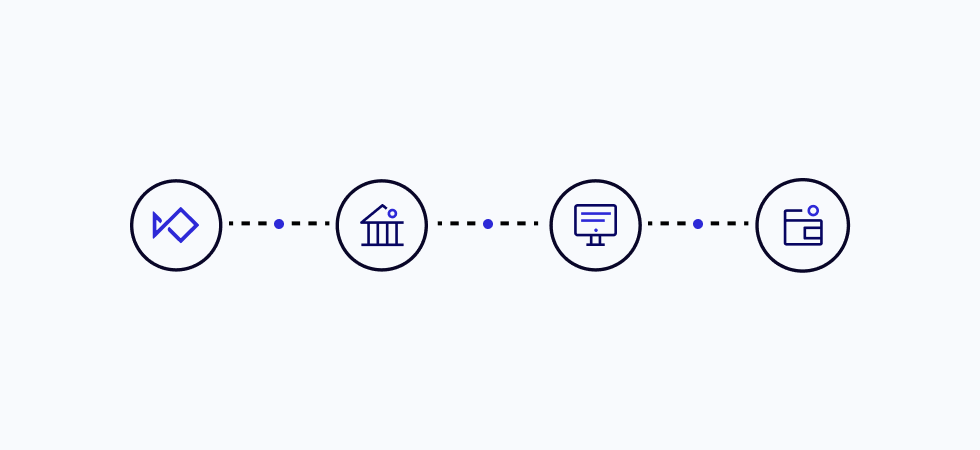

4. Collaboration

Fraud and compliance professionals will often share war stories and lessons learned, but it's much harder to share data.

Sardine will soon launch a utility for data sharing among banks, fintech companies and crypto wallets to catch more fraud.

We work better together.

If you want to catch more fraud, contact us.