In Part 1 of our false positives blog series, we accepted the reality that your system isn't built to report its own mistakes. We discussed using triangulation to work around that and generate a usable picture of your false positives.

Now comes the next problem.

Quantifying false positives is merely an observation. It is not a plan.

To reduce false positives in a meaningful way, you have to go one level deeper and ask a different set of questions:

- Where do they come from?

- Which are driven by partners?

- Which are actually data quality problems masquerading as fraud risk?

- And most importantly: where should we start?

That's what this second part of the series is about: breaking down your false positives into buckets that you can prioritize and act on.

How do you do that? It comes down to a 7-step process.

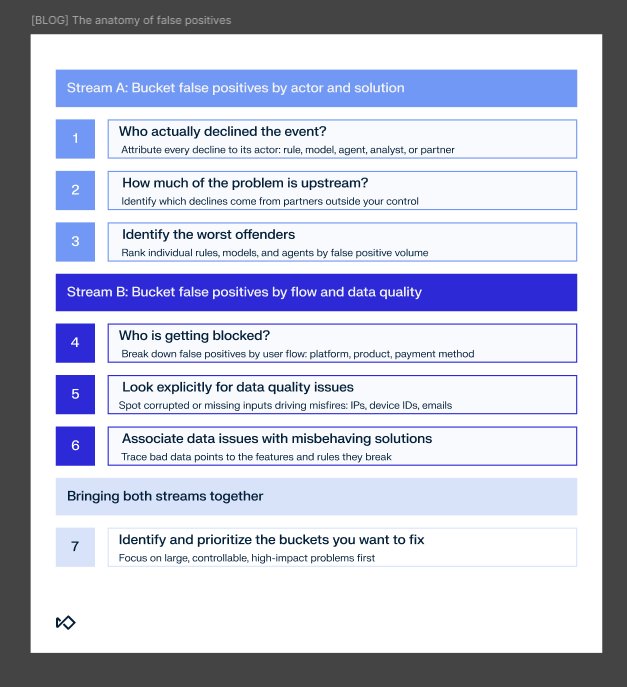

Stream A: Bucket false positives by actor and solution

Step 1: Who actually declined the event?

The first and most important step is to attach every false positive to the actor that made the decision.

When a transaction is declined or an onboarding attempt is blocked, someone or something said "no."

That someone might be:

- A rule in your system

- A machine learning model threshold

- An AI agent

- A human analyst in manual review

- A third-party partner: a bank, issuer, acquirer, or fraud vendor

Without this categorization, you will default to optimizing the things you can see, usually your rules and your models.

That's how teams spend months fine-tuning rules, only to later discover that most of their declines were coming from an issuer they never spoke to.

The first task is essentially accounting: for every decline, log which actor made the decision.

Sometimes it will be a single rule. Sometimes it will be a decision based on a certain model score threshold. Sometimes it will be a manual override. Sometimes it will be a binary response coming back from a payment partner.

You don't have to do this perfectly on day one; even a rough breakdown makes an enormous difference.

Step 2: How much of the problem is upstream?

Once you have that basic map, you can ask a question most people skip: how much of this is even under your control?

This is particularly important in card payments, where a single card transaction can pass through half a dozen hands before an issuer finally says yes or no.

Between the cardholder and the issuing bank, you often have:

- The merchant or platform itself

- A payment service provider

- An acquirer

- An acquiring processor

- A card network

- An issuer processor

- And finally, the issuing bank

And that's without counting the third-party fraud vendors some of these actors plug into their own stacks.

Each of these actors can decline a transaction. Each has its own risk logic. Each contributes its own false positives.

If 60% of your false positives are driven by upstream partners, then your maximum sphere of influence is capped at 40%.

That doesn't mean you should give up and walk away. It does mean you should rethink your goals, how you report them internally, and where you spend your energy.

You cannot tune rules you don't own. You cannot adjust models you never see. You cannot re-train an issuer's risk engine.

You can, however, quantify the impact, make it visible, and make sure everyone in the organization understands where the limits of your influence actually are. That step alone saves a lot of frustration later.

Strategically, if you're unhappy with a partner's performance and believe it's sub-standard, you can always work to replace them. But often you'll still have actors you don't control impacting your false positives.

Step 3: Identifying the worst offenders

Once you have your decisions sorted into high-level buckets, it's time to go one level deeper. Take every individual decision vehicle you have and detail them individually: every rule, workflow, investigator, and AI agent. This is where you want to get granular.

Realistically, perfect execution is difficult and time-consuming.

Focus first on the solutions that drive most of your declines. Even if a rule is relatively accurate, high volume usually means it is a primary source of false positives.

Tally them separately and identify the ones responsible for the most false positives in absolute terms. These are your "quick wins" because you'll have enough data to work with.

At the same time, your gut might tell you that some offenders are flying below radar: legacy rules, outdated policies, or solutions that were never properly validated.

If you suspect a low-volume rule is causing issues, use manual review to validate your hunch. You don't need a massive dataset to identify a broken policy.

Stream B: Bucket false positives by flow and data quality

Step 4: Who is getting blocked?

We now know what is blocking your users, but we still need to know where those users are coming from.

Even within the part of the stack you control, false positives are rarely evenly distributed. They tend to cluster around specific flows, such as:

- Mobile vs. web

- iOS vs. Android

- Product

- Payment method

- New vs. established users

If you only look at the actor that declined the event, you'll see a rule or a model misbehaving. But if you look at which flow the user went through, you might find a deeper issue: a specific flow that produces corrupted or missing data, causing your entire stack to misfire.

Consider an integration bug in your mobile SDK that sends incorrect IP data for iOS signups. You don't see that bug at first. What you see is that several geo-based rules suddenly have higher false positive rates on that platform. A model that uses IP-based features also seems to drift. Analysts complain that events coming from mobile look weird.

If you only look at the rule level, you'll waste weeks recalibrating models. By breaking it down by flow, you quickly realize the logic is fine on the web, and the problem is isolated to the iOS registration path.

It isn't always a data bug. It might be that a specific flow concentrates many good users who behave differently than your general population, which on its own can drive false positives up.

So once you've bucketed your false positives by actor, do the same by user journey:

- Which product surface? (e.g., app vs. desktop)

- Which platform? (e.g., iOS vs. Android)

- Which payment method? (e.g., Apple Pay vs. credit card)

- Which geography? (e.g., Tier 1 vs. emerging markets)

- Which specific funnel? (e.g., guest checkout vs. logged-in)

Patterns will emerge very quickly.

Step 5: Look explicitly for data quality issues

The moment you start seeing patterns by flow, you will almost always run into the same culprit: data quality.

Sometimes the underlying fraud logic is actually fine. The rules are reasonable. The model is well-calibrated. The analysts are experienced. And yet, on a particular slice of traffic, your performance tanks.

That's often because the system is operating on corrupted or missing inputs:

- Default IP addresses where the real IP failed to capture

- Placeholder emails or garbage values

- Device IDs that reset to null on some OS versions

- Payment metadata that is never passed through for a certain method

- Timeouts or integration errors with third-party intelligence sources

A good method for quickly uncovering such issues is to group data points by their value and look for values that have a suspiciously high count.

Step 6: Associate data issues with misbehaving solutions

Once you identify data quality issues, you have some detective work to do.

You need to trace each corrupted data point to the features it influences (e.g., email to email velocity), then link those features to your misbehaving rules or models.

For most organizations this exercise can prove incredibly hard, but you have a shortcut: your list of top offender solutions from Step 3.

Cross-reference your corrupted data points with the inputs used by your worst-performing rules. You might find a hidden connection without spending days analyzing data schemas.

By associating data issues with solutions, you'll be able to complete the last link in the chain: attributing value to each of these issues, just as you've done with your worst offenders.

Now it's time to bring it all together.

Bringing both streams together

Step 7: Identify and prioritize the buckets you want to fix

With this map in place, prioritization becomes much more straightforward.

You start with buckets that are:

- Large enough to matter

- Under your control

- Not obviously caused by upstream or data-quality issues that belong elsewhere

In many organizations, this means:

- A small number of high-volume, high-impact rules

- One or two model thresholds that were set conservatively

- Specific case management policies that encourage over-blocking

- A couple of flows where your system produces data issues

These are exactly the areas we'll focus on in Part 3, where we get into the mechanics of pruning misbehaving rules, adjusting thresholds, and redesigning parts of the decision logic to be less trigger-happy.

That work is only effective because you've done the root cause analysis first and know how to invest smartly.

From map to roadmap

By the end of this process, you should have three assets:

- A map of the false positive landscape broken down by actor, flow, and data health

- A clear understanding of which parts of the problem you own and which are inherited from partners or architectural constraints

- A shortlist of high-impact, fixable buckets where you can start making changes with a reasonable expectation of moving the needle

This is what turns "false positives" from an amorphous complaint into a concrete workstream.

In Part 3 of this series, we'll zoom into those high-impact buckets and discuss the specific tactics for resolution:

- How to decide whether a misbehaving rule should be turned off, softened, or sent to manual review instead of auto-decline

- How to use the "mirror image" of your fraud-detection process to design exclusions that remove false positives without opening the door for more fraud

- How to deal with data quality issues that are degrading your fraud prevention system's performance

Finally, in Part 4, we'll build a global safety net: high-level heuristics that sit on top of your entire system and catch good users before any underlying solution (rule, model, agent, or human) can mistakenly block them.

For now, if you've gone from "we have false positives" to "we know where most of them come from and which parts we can actually fix," you're already well ahead of most teams.

That's a good place to be.