Agentic banking and the future of AI-driven financial control

What’s up fraud fighters, and welcome to Fraud Forward!

This episode picks right back up in one of the most important places this conversation could go: what happens after we move past the shiny AI headlines and start talking about control, governance, disputes, and real accountability inside financial services.

Because that is the real conversation around agentic banking.

Not just whether AI in banking is possible.

Not just whether customers will use it.

But whether banks are ready to govern it responsibly when AI agents in banking start influencing payments, authorization, disputes, and customer trust.

What I really appreciated about this part of the conversation is that we did not stay at the hype layer. We got into what agentic payments actually require if institutions want to use them safely. We talked about AI governance in banking, AI transaction monitoring, banking compliance and AI, and what it means to preserve trust when AI banking transactions start happening closer to the customer.

And that matters.

Because if agentic banking is going to become part of the future of financial services AI, then we have to build it with intention. We have to build it with controls. And we have to make sure trust infrastructure in banking grows with the technology instead of getting left behind by it.

What you’ll hear in this episode

- How agentic banking can solve real customer friction in ways traditional digital banking tools often cannot

- Why AI agents in banking create new questions around governance, accountability, and control

- How AI payment authorization, OTP checks, and layered safeguards can help make AI banking transactions safer

- Why banking compliance and AI need stronger context sharing, better auditability, and clearer ownership

- How AI transaction monitoring may need to evolve from reviewing transactions alone to reviewing prompts, instructions, and agent-led behavior

- Why banking dispute resolution becomes more complex when a customer authorizes an AI agent instead of initiating every action directly

- How community bank innovation and regional bank innovation can benefit from agentic banking without giving up control

You should listen to this episode if

- You work in fraud, compliance, payments, risk, digital banking, or bank operations

- You are trying to understand how agentic banking may affect governance and customer trust

- You are evaluating AI in banking beyond chatbot functionality

- You care about banking fraud prevention, AI fraud prevention, and safer banking with AI

- You want a more practical conversation about how AI-powered banking actually works inside real institutions

- You are asking how banks can modernize customer experience without creating new gaps in control

If you liked this episode, be sure to subscribe and review the podcast on iTunes, Spotify, YouTube, or wherever you listen to podcasts.

Episode notes & key takeaways

Before we double-click on the notes, I just want to say that my marketing team told me I need to structure these notes a certain way so that people can find my podcast. What follows is a bit of that.

Agentic banking gets real when it solves real customer problems.

One thing I wanted to do in this conversation was make agentic banking feel practical.

Not theoretical.

Not futuristic just for the sake of sounding innovative.

Practical.

Because that is where a lot of conversations around AI in banking start to lose people. They stay too abstract. They talk about AI-powered banking like it is just a concept, when what people really want to know is simple: what would this actually do for me?

That is why I shared my own example around paying my cousin for helping with my kids. It is inconsistent. It is easy to forget. It does not fit neatly into a standard recurring payment workflow. And that is exactly why agentic banking matters. It creates room for a more adaptive customer experience. One that responds to intent instead of forcing every need into a fixed product feature.

That is a real shift in digital banking AI.

Governance has to move with the transaction

Another theme we kept coming back to is that AI governance in banking cannot sit off to the side.

It has to move with the transaction.

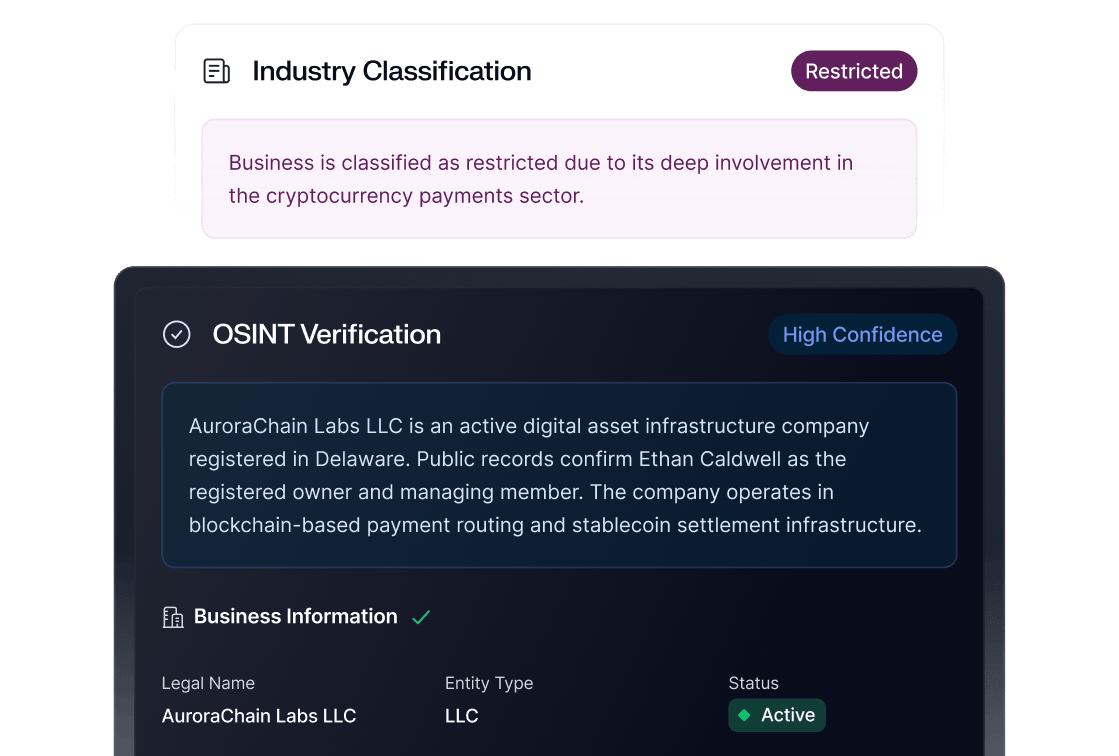

Tyllen made a point that I think is really important here. Banks do not need to throw out their entire governance framework just because AI agents in banking are entering the picture. The core banking provider still matters. Existing policies still matter. Fraud controls still matter. But now institutions also need better context around why a transaction happened, what the agent was asked to do, and whether the action matched the bank’s rules.

That is where this gets interesting.

Because if agentic payments are going to scale, then banks need more than a result. They need visibility into the logic behind it. That is what makes AI banking transactions more governable, more defensible, and honestly more trustworthy.

Trust comes from control, not from hype

This was one of the strongest takeaways for me.

Trust does not come from saying the technology is smart.

Trust comes from control.

That means OTP verification. That means layered screening. That means keeping a clear record of who initiated what, which session was user-led versus agent-led, and what safeguards were triggered before money moved. That also means knowing when the bank can step in, shut something down, or separate an AI agent’s activity from the customer’s direct access.

If we want safer banking with AI, we cannot treat trust like a branding exercise. It has to be operational. It has to be built into the product, the authorization layer, and the decision trail.
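To make that concrete, here is a rough Python sketch of what layered checks on an agent-led payment could look like. Treat it as an illustration only: the `PaymentRequest` fields, the `authorize` function, and the 500.00 OTP threshold are all hypothetical names and values made up for this example, not any bank's or vendor's actual API.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from typing import List

# Hypothetical threshold: agent-led payments at or above this need an OTP.
OTP_THRESHOLD = Decimal("500.00")


@dataclass
class PaymentRequest:
    customer_id: str
    amount: Decimal
    payee: str
    session_type: str              # "user" or "agent"
    otp_verified: bool = False
    agent_suspended: bool = False  # bank-side switch that disables the agent only


@dataclass
class Decision:
    approved: bool
    safeguards_triggered: List[str] = field(default_factory=list)


def authorize(req: PaymentRequest) -> Decision:
    """Run layered checks and record which safeguards fired."""
    triggered: List[str] = []

    # Layer 1: the bank can shut off agent activity without touching
    # the customer's own direct access.
    if req.session_type == "agent" and req.agent_suspended:
        triggered.append("agent_suspended")
        return Decision(False, triggered)

    # Layer 2: larger agent-led payments require a one-time passcode.
    if req.session_type == "agent" and req.amount >= OTP_THRESHOLD:
        triggered.append("otp_required")
        if not req.otp_verified:
            return Decision(False, triggered)

    return Decision(True, triggered)
```

In this sketch, a 750.00 agent-led payment with no OTP comes back declined with `otp_required` on the record, while the same payment from a user-led session passes with no safeguards triggered. A real institution would layer its existing fraud screening on top of this.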

Banking compliance and AI need better context

Lisa brought a really important perspective to this conversation because she kept grounding everything back in the systems compliance teams already understand.

That matters.

Because one of the biggest mistakes people make when they talk about financial services AI is acting like this is a completely new universe. It is not. It is a new interaction layer. A new data structure. A new operational challenge. But the core need is still the same: institutions need enough information to understand what happened, who initiated it, and whether the transaction was authorized and compliant.

That is why context sharing is so important.

If banking compliance and AI are going to work together, institutions need to preserve the record. The prompt. The instruction. The session type. The authorization trail. Without that, fraud teams, risk teams, and dispute teams are being asked to make decisions in the dark.
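As one way to picture that record, here is a minimal Python sketch of an audit entry that keeps the prompt, the instruction, the session type, and the authorization trail together. The `AgentAuditRecord` class, its field names, and the helper functions are assumptions for illustration; no standard schema for this was named in the episode.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Sequence, Tuple


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class AgentAuditRecord:
    transaction_id: str
    customer_id: str
    session_type: str                     # "user" or "agent"
    prompt: str                           # what the customer asked for, verbatim
    agent_instruction: str                # what the agent decided to execute
    authorization_trail: Tuple[str, ...]  # ordered checks that were passed
    recorded_at: str                      # ISO 8601 timestamp in UTC


def build_record(
    transaction_id: str,
    customer_id: str,
    session_type: str,
    prompt: str,
    agent_instruction: str,
    authorization_trail: Sequence[str],
) -> AgentAuditRecord:
    """Create a timestamped record at the moment the action is taken."""
    return AgentAuditRecord(
        transaction_id=transaction_id,
        customer_id=customer_id,
        session_type=session_type,
        prompt=prompt,
        agent_instruction=agent_instruction,
        authorization_trail=tuple(authorization_trail),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json(record: AgentAuditRecord) -> str:
    """Serialize the record so fraud, risk, and dispute teams can share it."""
    return json.dumps(asdict(record), sort_keys=True)
```

The point of the design is simply that the context travels with the transaction as one unit, so nobody downstream has to reconstruct it from separate logs.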

AI transaction monitoring is going to evolve

This conversation also pushed into a really important future-state question: what does AI transaction monitoring actually look like when the action starts with a prompt?

Because that changes the review process.

It is one thing to review a suspicious transaction after it happens. It is another thing to understand the natural language, intent, and agent behavior that led to it. Tyllen talked about how engineering is already shifting in this direction by reviewing prompt logic, not just final code output. That same mindset may become more important in banking fraud prevention too.

Not because the goal changes.

But because the evidence does.

That means AI fraud prevention may increasingly depend on understanding what the agent was asked to do, how it interpreted the request, and whether the final action matched the original intent.
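A small Python sketch of that last check, comparing what the customer intended with what the agent executed, might look like this. It assumes the prompt has already been turned into a structured `Intent` upstream, which is the genuinely hard part and is not shown here. The class names, fields, and mismatch labels are hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import List


@dataclass
class Intent:
    """What the customer asked for, already parsed from the prompt."""
    payee: str
    max_amount: Decimal


@dataclass
class ExecutedAction:
    """What the agent actually did."""
    payee: str
    amount: Decimal


def intent_mismatches(intent: Intent, action: ExecutedAction) -> List[str]:
    """Return the ways the executed action departs from the stated intent."""
    issues: List[str] = []
    if action.payee != intent.payee:
        issues.append("payee_mismatch")
    if action.amount > intent.max_amount:
        issues.append("amount_exceeds_intent")
    return issues
```

An empty list means the action stayed inside what was asked. Anything else is a signal for a reviewer to look at the prompt and the agent's interpretation, not just the transaction.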

Disputes are going to get harder before they get easier

We also spent time on banking dispute resolution, and honestly, this is one of the places where agentic banking forces the industry to get very real, very fast.

Because if a customer says, “I didn’t authorize that,” but the customer did authorize the AI agent, then what happens next?

That is not a small question.

That is a core operational question.

Lisa did a great job breaking down how dispute resolution may need to evolve when authorization is delegated, delayed, or partially automated. And what stood out to me is that the answer keeps coming back to the same thing: context. If both parties do not have enough information to understand what the customer intended, what the agent executed, and what controls were in place, then the dispute process becomes much harder to resolve fairly.

That is why standardization matters so much here. Not just for efficiency, but for accountability.

Community banks should not sit this out

One thing I do not want community banks or regional institutions to hear in this conversation is that agentic banking is only for the biggest players.

It is not.

In a lot of ways, this may be one of the biggest opportunities for community bank innovation and regional bank innovation if institutions approach it with the right controls in place. Because the real differentiator is not going to be who talks about AI the most. It is going to be who builds the safest, smartest, most trusted experience around it.

And that is still a people business.

That is still a relationship business.

Which means this conversation belongs in community banking just as much as anywhere else.

AI is coming to banking

What stayed with me most in this episode is that agentic banking is not really a conversation about whether AI is coming to banking.

It already is.

The real question is whether banks are going to build it responsibly.

Whether they are going to embed trust directly into the system.

Whether they are going to treat governance, fraud prevention, authorization, and compliance like core design requirements instead of cleanup work after launch.

Because fraud is still human-driven. Pressure is still real. And when money moves, especially in AI banking transactions, the consequences are never just technical. They hit trust. They hit stability. They hit real people.

So no, I do not think this is something institutions should fear.

But I do think it is something they need to take seriously.

Agentic banking can create a smarter, more adaptive experience for customers. It can strengthen banking fraud prevention. It can support better AI transaction monitoring. It can help institutions build safer systems.