Agentic Banking: Designing the Trust Engine with Accountability for the AI Era

What's up fraud fighters, and welcome to Fraud Forward!

Today we’re talking about AI transaction monitoring and why it matters so much as agentic banking starts moving from concept to reality.

Because once AI agents in banking move beyond simple chat and into actions, payments, and customer instructions, the risk conversation changes fast.

This is no longer just about whether AI in banking feels helpful.

It's about whether institutions can actually monitor intent, trace authorization, support banking dispute resolution, and reduce fraud risk without losing the trust they’ve worked so hard to build.

In this episode, I sat down with Tyllen Bicakcic to break down what agentic banking actually means, why it is gaining traction, and why AI transaction monitoring is going to become one of the most important control layers in this entire conversation.

What I really appreciated about this discussion is that we did not stay at the hype level.

We talked about real use cases. Real banking friction. Real fraud questions. And the very real challenge of how banks can build safer banking with AI without creating new blind spots around governance, payment risk monitoring, and customer trust.

And the reality is this: If AI agents are going to influence transactions, then transaction monitoring in banking has to evolve right alongside them.

What you'll hear in this episode

- What agentic banking actually means and how it differs from traditional AI in banking

- Why AI agents in banking create new questions around intent, authorization, and accountability

- How AI transaction monitoring can strengthen banking fraud prevention and AI fraud prevention

- Why trust infrastructure in banking matters as much as innovation

- How banks can think about AI governance in banking without rebuilding everything from scratch

- Why banking compliance and AI must move together as agentic payments become more practical

- How banking dispute resolution changes when an AI agent initiates or influences a transaction

- Where real-time fraud monitoring, payment risk monitoring, and AI risk signals fit into the next phase of digital banking AI

You should listen to this episode if

- You lead fraud, risk, compliance, BSA, AML, or payments programs at a bank or credit union

- Your team is evaluating agentic banking or AI agents in banking

- You are thinking about how AI transaction monitoring should work in real financial services AI environments

- You want a better understanding of AI payment authorization and dispute handling in an AI-driven world

- You care about building safer banking with AI without giving up control, visibility, or trust

If you liked this episode, be sure to subscribe and review the podcast on iTunes, Spotify, YouTube, or wherever you listen to podcasts.

Episode notes & key takeaways

Before we double click on the notes, I just want to say that my marketing team told me I need to structure these notes a certain way in order for people to find my podcast. The below is a bit of that.

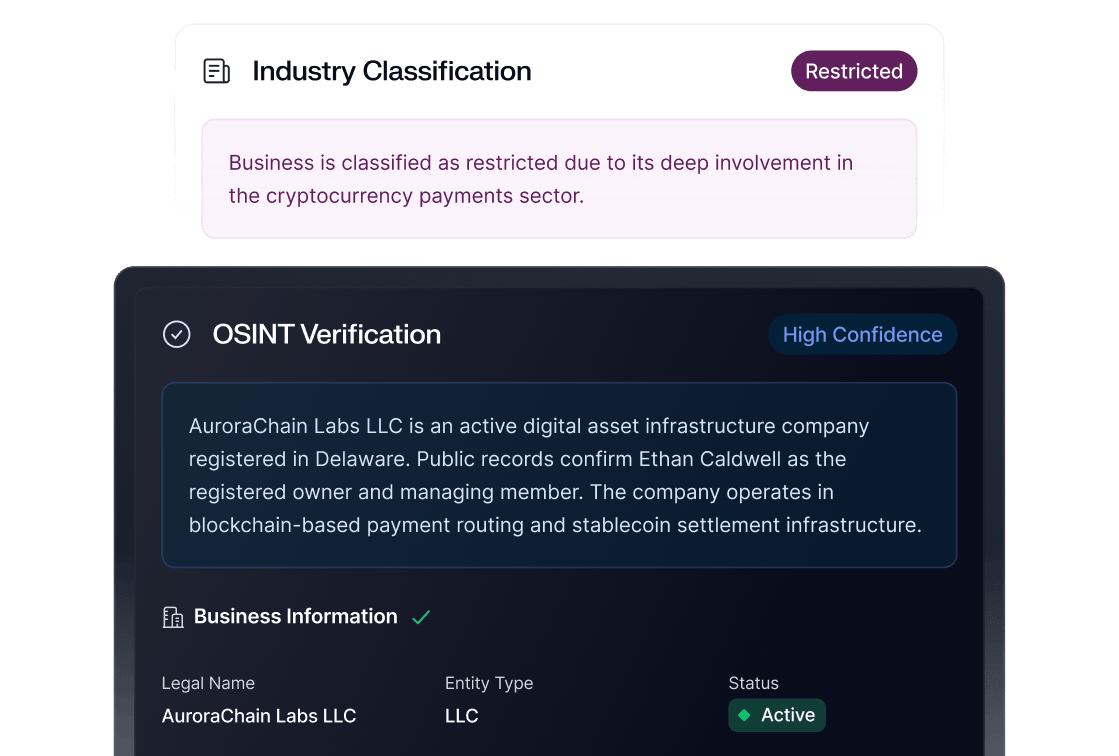

AI transaction monitoring starts with intent

One of the biggest themes in this conversation is that AI transaction monitoring cannot just focus on the transaction itself.

It has to focus on intent.

That was really the thread running through this entire discussion. Tyllen kept coming back to the idea that traditional banking systems usually see the final action, but not always the reason behind it. And that changes when agentic banking enters the picture.

If a customer asks an AI agent to help them save for something, move money, or set up a recurring action, the monitoring opportunity becomes much richer. Institutions are no longer limited to seeing the final transfer or payment event. They can start to understand why it happened, what was requested, and how the AI interpreted that request.

That matters because AI transaction monitoring becomes much stronger when it includes context, not just activity.
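To make that a little more concrete, here is a minimal sketch of what a monitoring event could look like once intent travels with it. This is only an illustration under my own assumptions, not something described in the episode: the names `IntentContext`, `MonitoredTransaction`, and `describe` are hypothetical, and a real system would carry far more fields.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class IntentContext:
    """Hypothetical record of why a transaction happened."""
    customer_request: str      # what the customer asked for, in their own words
    agent_interpretation: str  # how the AI agent restated that request


@dataclass(frozen=True)
class MonitoredTransaction:
    """A transaction event, optionally enriched with intent context."""
    transaction_id: str
    amount_cents: int
    payee: str
    intent: Optional[IntentContext] = None  # None for activity-only events


def describe(event: MonitoredTransaction) -> str:
    """Summarize an event for a reviewer, including intent when it exists."""
    summary = f"{event.transaction_id}: {event.amount_cents / 100:.2f} to {event.payee}"
    if event.intent is None:
        return summary + " (no intent context captured)"
    return (
        summary
        + f" | asked: {event.intent.customer_request!r}"
        + f" | interpreted as: {event.intent.agent_interpretation!r}"
    )
```

The design point is simply that the activity-only view and the intent-enriched view can share one record shape, so existing monitoring keeps working while the extra context is added where it exists.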

Agentic banking changes the monitoring conversation

Another thing that stood out to me is that agentic banking is not just another chatbot layer inside digital banking AI.

It is a shift toward action.

And once AI agents in banking start taking action on behalf of customers, whether that is setting up savings behavior, triggering reminders, or influencing payment flows, monitoring has to move with them.

That does not mean banks need to throw away their current programs. In fact, one of the strongest points in this episode is that much of the existing banking risk management framework still matters. Existing fraud controls still matter. Existing compliance programs still matter.

What changes is the depth of information available.

That is where AI governance in banking starts to feel much more practical. You are not replacing governance. You are giving it more context to work with.

Trust infrastructure matters more than hype

We also spent a lot of time talking about trust.

And I think that matters because too many conversations around AI in banking still get framed like a product demo instead of a risk reality.

Tyllen made a strong point that trust already exists at banks. Customers may be far more willing to engage with AI-powered banking through their financial institution than through a general-purpose tool. But that trust only holds if the institution can control the experience.

That is where trust infrastructure in banking becomes essential.

Not vague trust.

Not assumed trust.

Actual control.

Can the bank verify the action?

Can the bank separate user activity from agent activity?

Can the bank see what was asked, what was allowed, and what was executed?

Can the bank stop bad behavior without breaking the whole experience?

That is the real work.

And it is exactly why AI transaction monitoring matters.
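One way to picture those control questions is as a small check that runs before an agent action executes. The sketch below is an assumption-heavy illustration, not an approach taken from the conversation: `Actor`, `Mandate`, `ActionRequest`, and `review` are hypothetical names, and the rule set is deliberately tiny.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Tuple


class Actor(Enum):
    """Who initiated the action, so user and agent activity stay separable."""
    USER = "user"
    AGENT = "agent"


@dataclass(frozen=True)
class Mandate:
    """Hypothetical description of what the agent is allowed to do."""
    allowed_actions: FrozenSet[str]
    max_amount_cents: int


@dataclass(frozen=True)
class ActionRequest:
    actor: Actor
    action: str
    amount_cents: int


def review(request: ActionRequest, mandate: Mandate) -> Tuple[bool, str]:
    """Return (allowed, reason) for a single request.

    Only the individual request is approved or blocked, so one bad
    action does not have to end the whole session.
    """
    if request.actor is Actor.USER:
        # The mandate only constrains the agent in this sketch.
        return True, "user-initiated; existing controls apply"
    if request.action not in mandate.allowed_actions:
        return False, f"agent not permitted to perform {request.action!r}"
    if request.amount_cents > mandate.max_amount_cents:
        return False, "amount exceeds the agent's limit"
    return True, "within agent mandate"
```

Returning a reason alongside the decision is the part that matters for monitoring: it leaves a record of what was asked and what was allowed, not just what was executed.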

Fraud prevention gets stronger when context gets better

One of my favorite parts of this episode was the discussion around auditing the thinking.

Because if you work in fraud detection in banking, you already know how often teams end up asking the same question after something slips through.

What were they thinking?

What this episode suggests is that AI fraud prevention may actually give us a better way to answer that question.

If an AI agent is involved in the flow, and if the system is built well, then institutions may be able to review the logic behind the action in ways they simply cannot with traditional human-led interactions. That includes understanding prompts, decisions, steps taken, and whether a transaction aligned with policy and customer input.

That does not eliminate fraud risk.

But it does create a better foundation for banking fraud prevention and real-time fraud monitoring.

And in this environment, more context is not a nice-to-have.

It is a control advantage.

Compliance does not disappear, it adapts

Lisa added a perspective here that I really appreciated because she kept grounding the conversation in operational reality.

She made the point that this is not an entirely new compliance universe. It is a new channel, a new interaction layer, and a new type of data environment. And that framing is important.

Banking compliance and AI should not be treated like two separate worlds.

The real question is how institutions adapt existing processes to support agentic payments, new monitoring inputs, and new forms of authorization data. That includes how teams think about anomaly detection, user sessions versus agent sessions, and what kinds of records need to be stored when an AI system influences a transaction.

That is why AI governance in banking has to be practical.

Not theoretical.

Practical.

Disputes and authorization will define the next phase

Even in this first part of the conversation, you can feel where this is heading.

If an AI agent helps initiate or shape a transaction, then AI payment authorization and banking dispute resolution are going to become much more important.

Because the question is no longer just whether a customer clicked a button.

The question becomes:

What did they instruct?

What did the AI do?

What controls were in place?

And does the final action actually match the original intent?

That is where AI transaction monitoring for agentic banking risk becomes such a valuable framing. Monitoring is not just about fraud scoring after the fact. It is about creating the visibility institutions will need when disputes happen, when customers push back, or when teams need to prove what happened and why.
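As a rough sketch of that last question, a dispute reviewer could compare what the customer instructed with what was actually executed, field by field. Everything here is hypothetical and assumed for illustration (`PaymentDetails`, `find_mismatches`, and the three fields chosen); it presumes the institution stored both sides of the record in the first place.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class PaymentDetails:
    """Hypothetical minimal shape shared by the instruction and the execution."""
    payee: str
    amount_cents: int
    frequency: str


def find_mismatches(instructed: PaymentDetails, executed: PaymentDetails) -> List[str]:
    """List every field where the executed payment differs from the instruction."""
    mismatches = []
    for field in fields(PaymentDetails):
        wanted = getattr(instructed, field.name)
        actual = getattr(executed, field.name)
        if wanted != actual:
            mismatches.append(
                f"{field.name}: instructed {wanted!r}, executed {actual!r}"
            )
    return mismatches
```

An empty list means the final action matched the stored instruction on these fields. Anything else gives the dispute team a specific starting point instead of a general "what happened?"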

The future is not less monitoring, it is smarter monitoring

One thing I kept coming back to while recording this episode is that the future of financial services AI is not going to reward institutions that move fastest without structure.

It is going to reward institutions that move intentionally.

The banks that win here will not be the ones that simply deploy AI agents in banking because the market says they should.

They will be the ones that can pair innovation with AI transaction monitoring, payment risk monitoring, banking compliance, and governance strong enough to support the experience.

That is the real opportunity in front of us.

Not just building something new.

Building something trustworthy.