In a recent blog post, Sardine CEO Soups Ranjan shared how he used our Data Analyst AI Agent to crack open a fraud ring he was investigating.

Just a week later, our fraud analytics team was using the same agent to investigate a different fraud ring, but this time with even more impressive results.

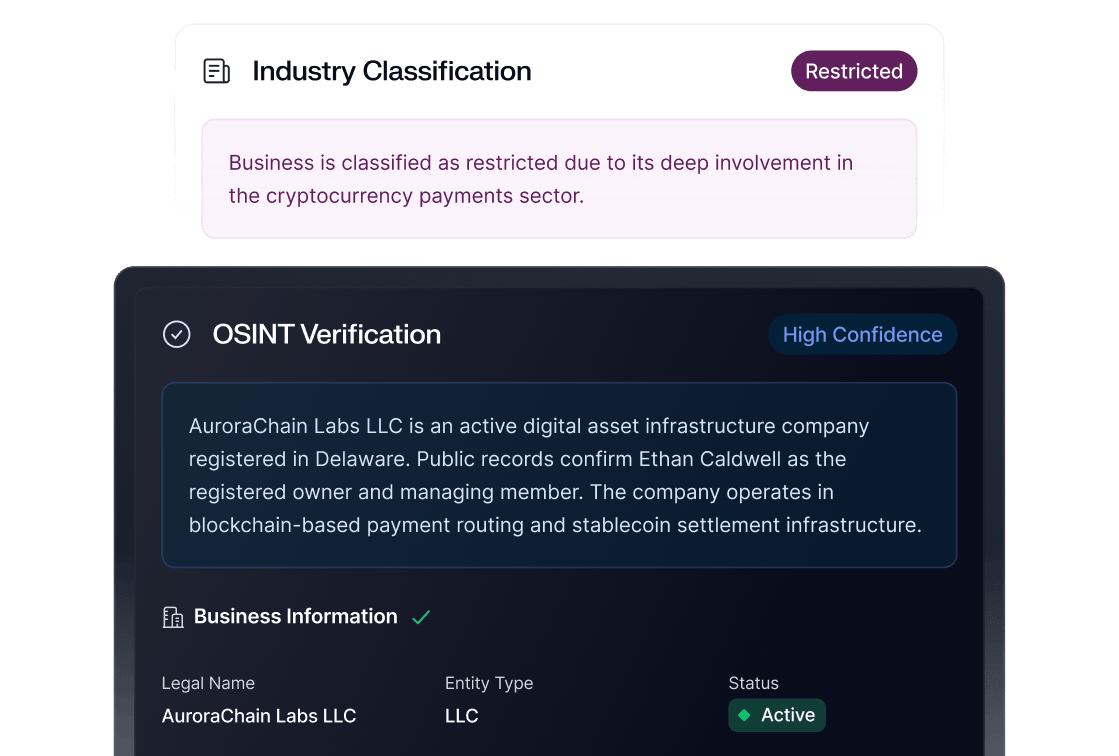

It all started when we noticed one of our crypto on-ramp customers was experiencing a fraud spike. Our team investigated it, located the leak, and fixed it. We even used the Data Analyst Agent to uncover all the impacted accounts, replicating the process from Soups’ earlier investigation.

Things got even more interesting the day after, when one of our analysts noticed that another on-ramp had seen a substantial spike in activity during the same time period.

She suspected the same ring was attacking again.

The difference? This time she decided to run the entire investigation with the Data Analyst Agent only.

Step one: The AI agent confirms the fraud anomaly

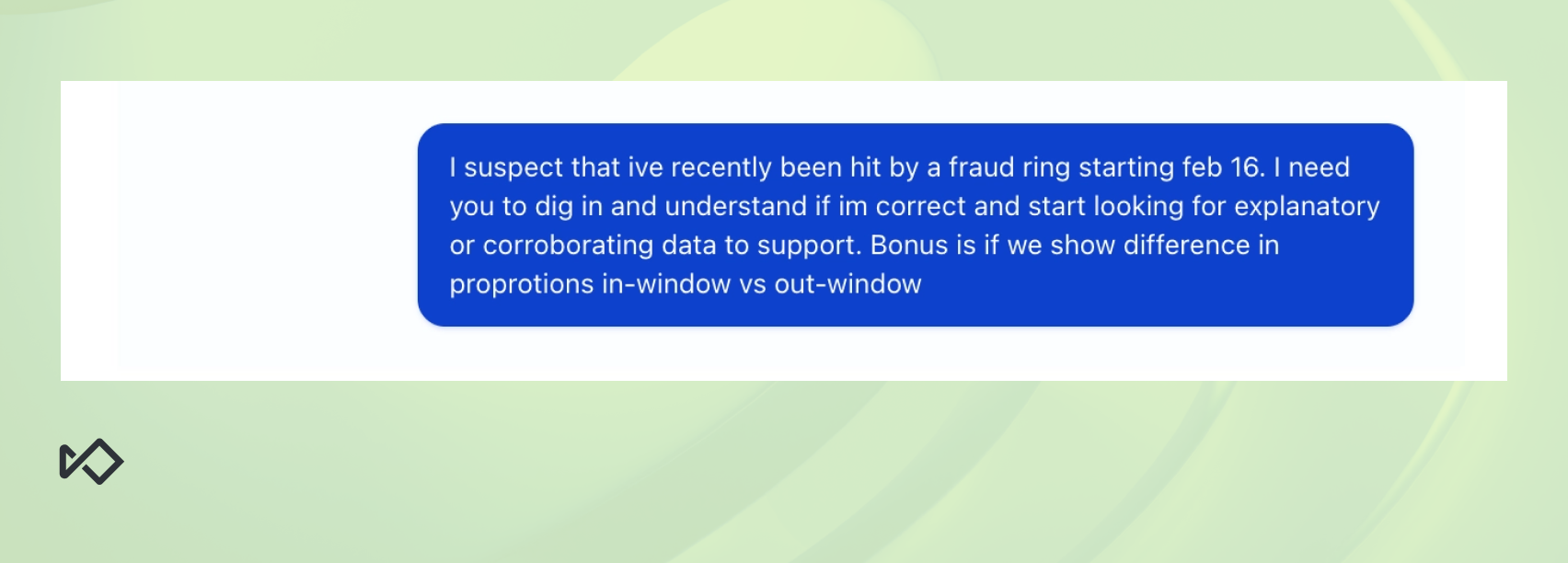

The initial prompt was pretty open-ended. It gave the agent the relevant time period, but nothing more than that.

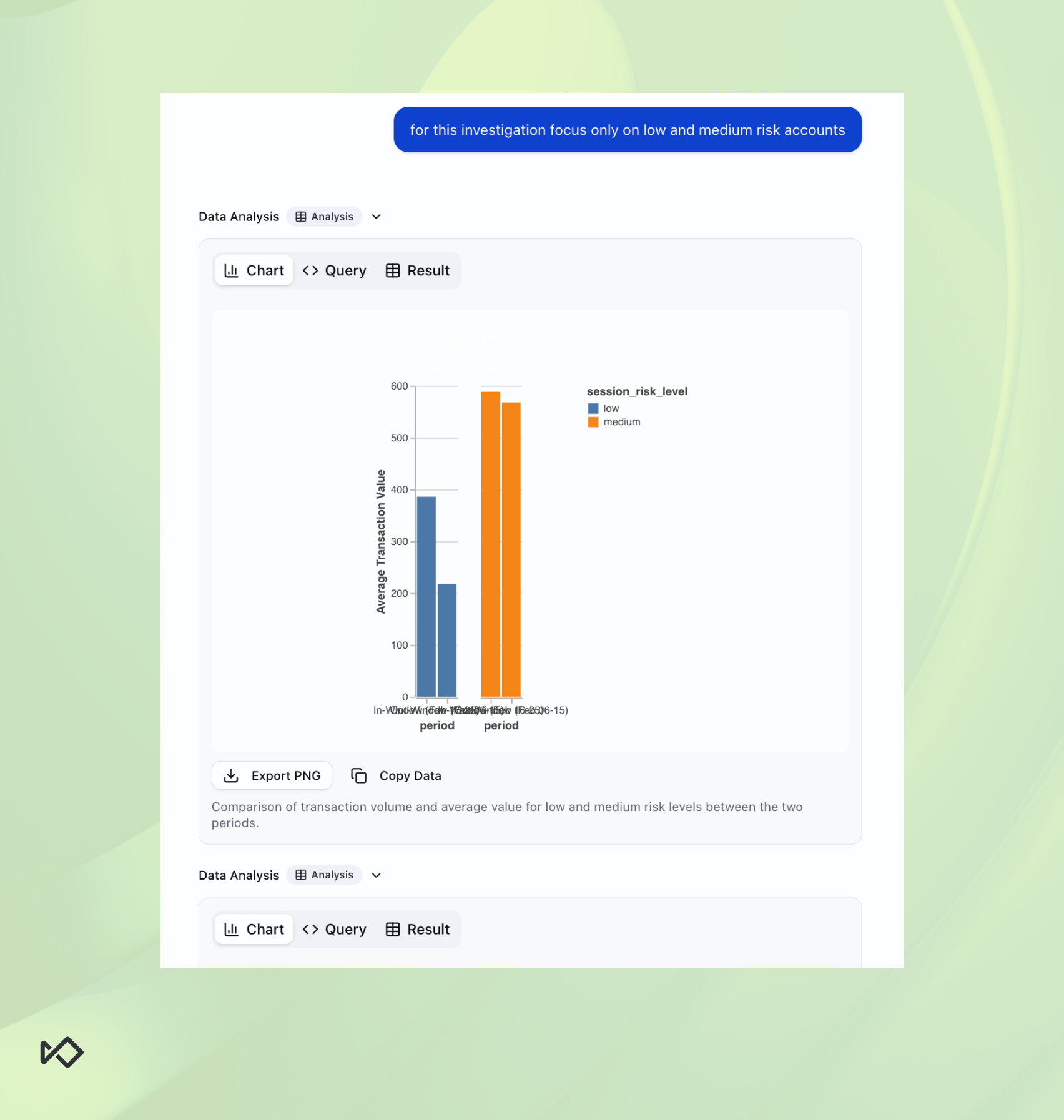

Initially the agent focused on sessions tagged as high risk, thinking a fraud spike would be found there, but typically anything tagged as high risk is already being blocked. With a quick correction from the analyst, the agent was able to confirm a volume anomaly:

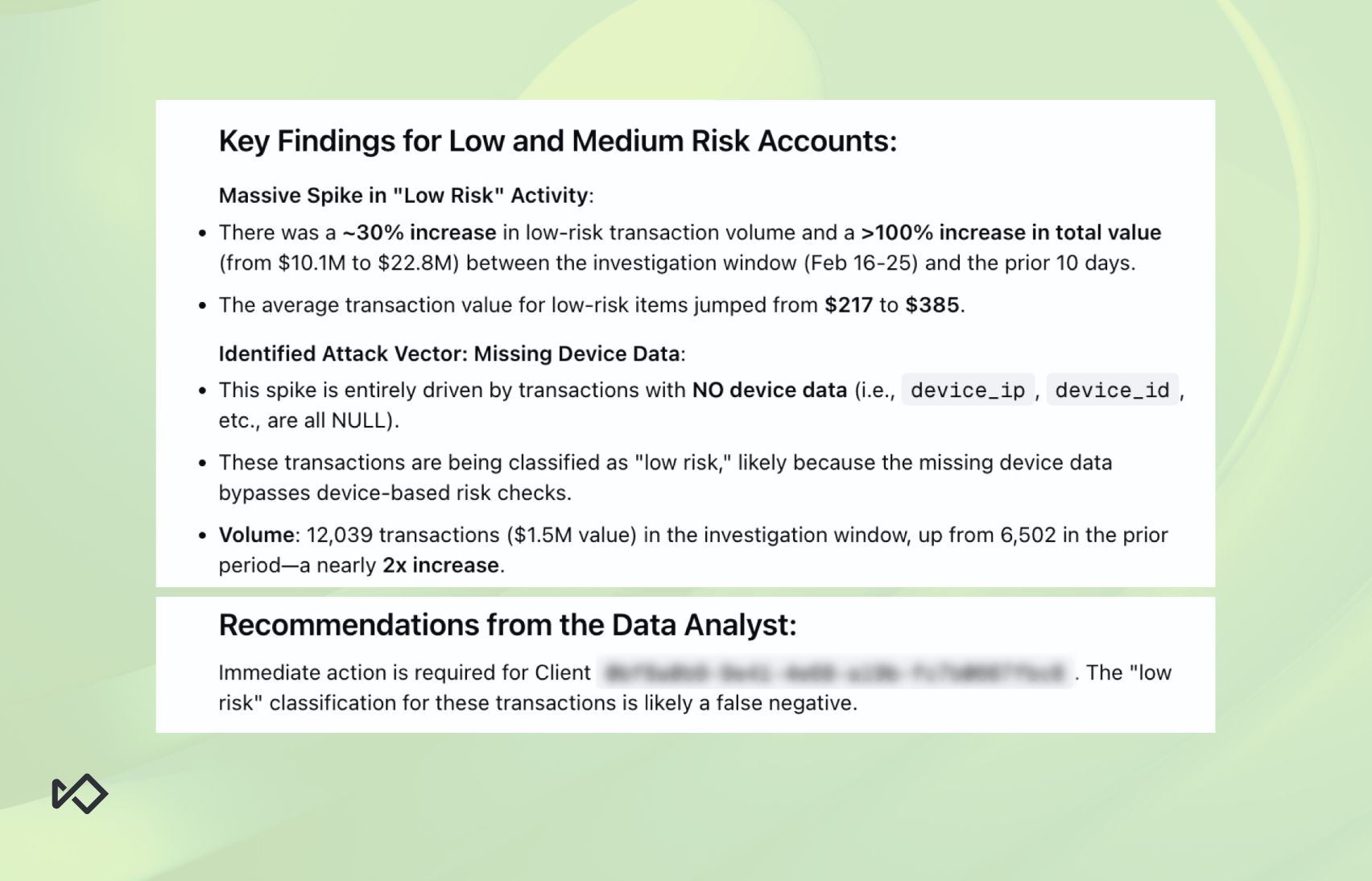

Evidently, while medium risk session numbers were nearly identical, low risk sessions had nearly doubled in value during the suspected timeframe. With that, the agent was able to give some more information:

Having established that something is going on, it was time to figure out what exactly.
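For readers who want to reproduce this kind of check by hand, here is a minimal sketch of the comparison described above: average daily session counts per risk level in a baseline window versus the suspected window. The field names (`risk_level`, `day`), the function names, and the toy data are all assumptions made up for illustration. They are not Sardine's schema or what the agent actually ran.

```python
from collections import Counter
from datetime import date


def sessions_per_day(sessions, start, end):
    """Average daily session count per risk level for start <= day <= end."""
    days = (end - start).days + 1
    counts = Counter(s["risk_level"] for s in sessions if start <= s["day"] <= end)
    return {level: n / days for level, n in counts.items()}


def volume_ratios(sessions, baseline, suspect):
    """Ratio of suspect-window to baseline-window daily volume per risk level."""
    base = sessions_per_day(sessions, *baseline)
    susp = sessions_per_day(sessions, *suspect)
    return {
        level: susp.get(level, 0.0) / rate
        for level, rate in base.items()
        if rate > 0
    }


# Toy data (hypothetical): one baseline day and one suspect day.
sessions = (
    [{"risk_level": "low", "day": date(2025, 1, 1)}] * 10
    + [{"risk_level": "medium", "day": date(2025, 1, 1)}] * 5
    + [{"risk_level": "low", "day": date(2025, 1, 2)}] * 19
    + [{"risk_level": "medium", "day": date(2025, 1, 2)}] * 5
)

ratios = volume_ratios(
    sessions,
    baseline=(date(2025, 1, 1), date(2025, 1, 1)),
    suspect=(date(2025, 1, 2), date(2025, 1, 2)),
)
print(ratios)
```

In this toy data, a ratio near 1 for medium risk alongside a ratio near 2 for low risk mirrors the shape of the anomaly the agent reported.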

Step two: Drilling down on the anomaly

To locate the source of the spike, the analyst requested that the agent segment the low risk sessions further:

The purpose of this line of questioning is to define the suspicious population well enough that the agent can later analyze its behavioral patterns.

But for now, we need to understand where it’s coming from. Here are a few segments from the agent’s response.

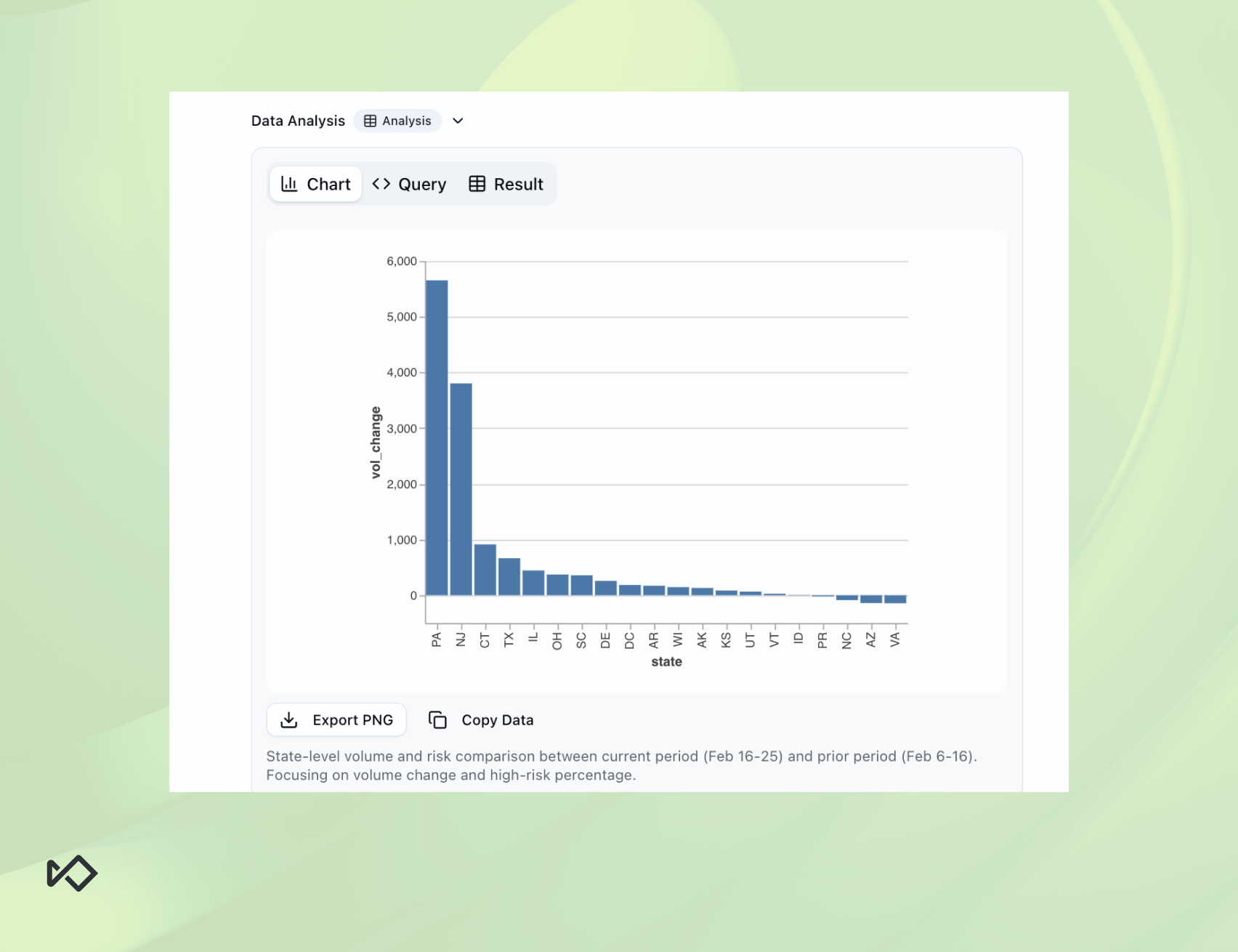

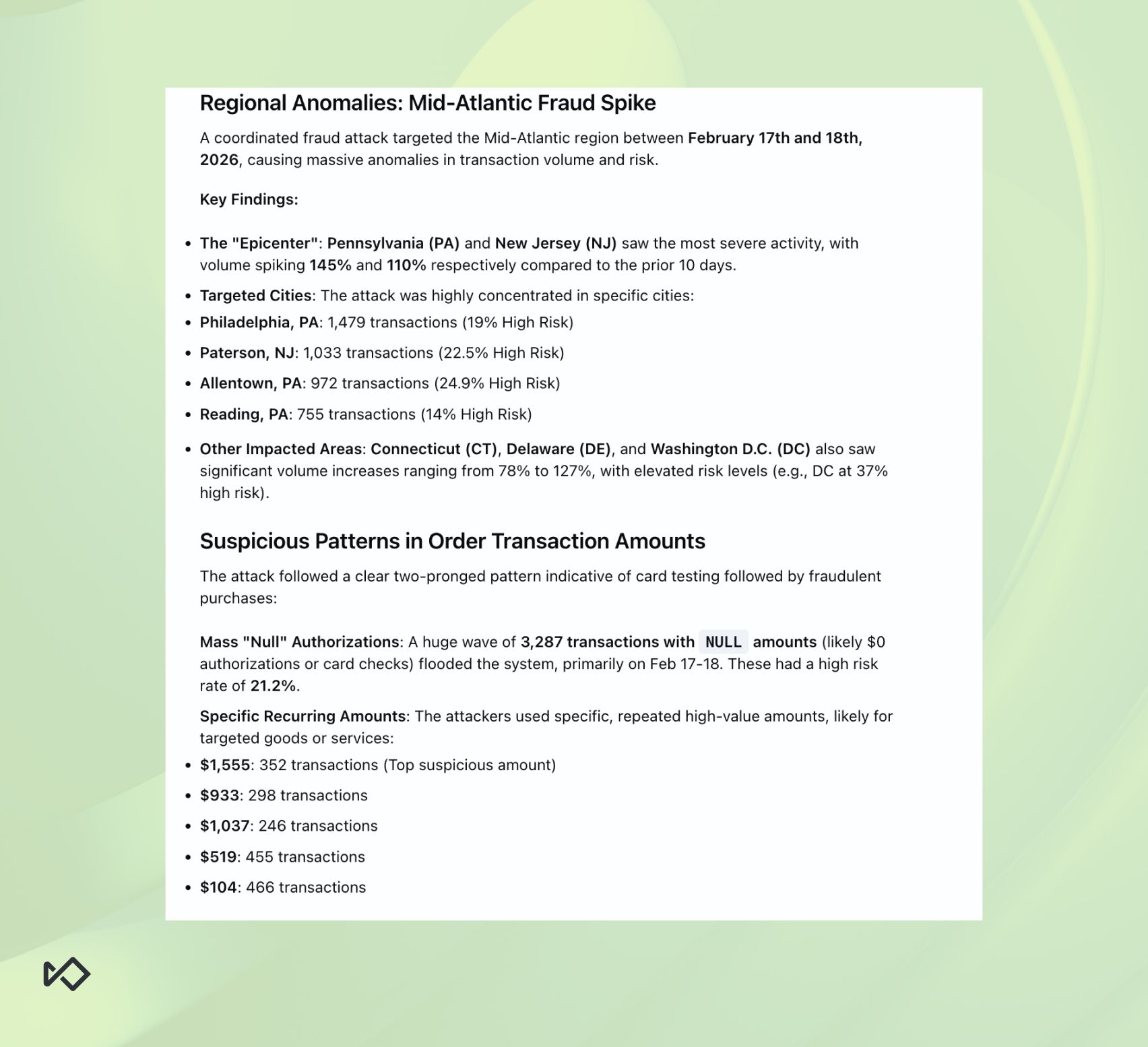

First, it started by segmenting the fraud according to account state. This showed a clear spike in fraudulent activity coming from Pennsylvania and New Jersey, though these were not the only states identified:

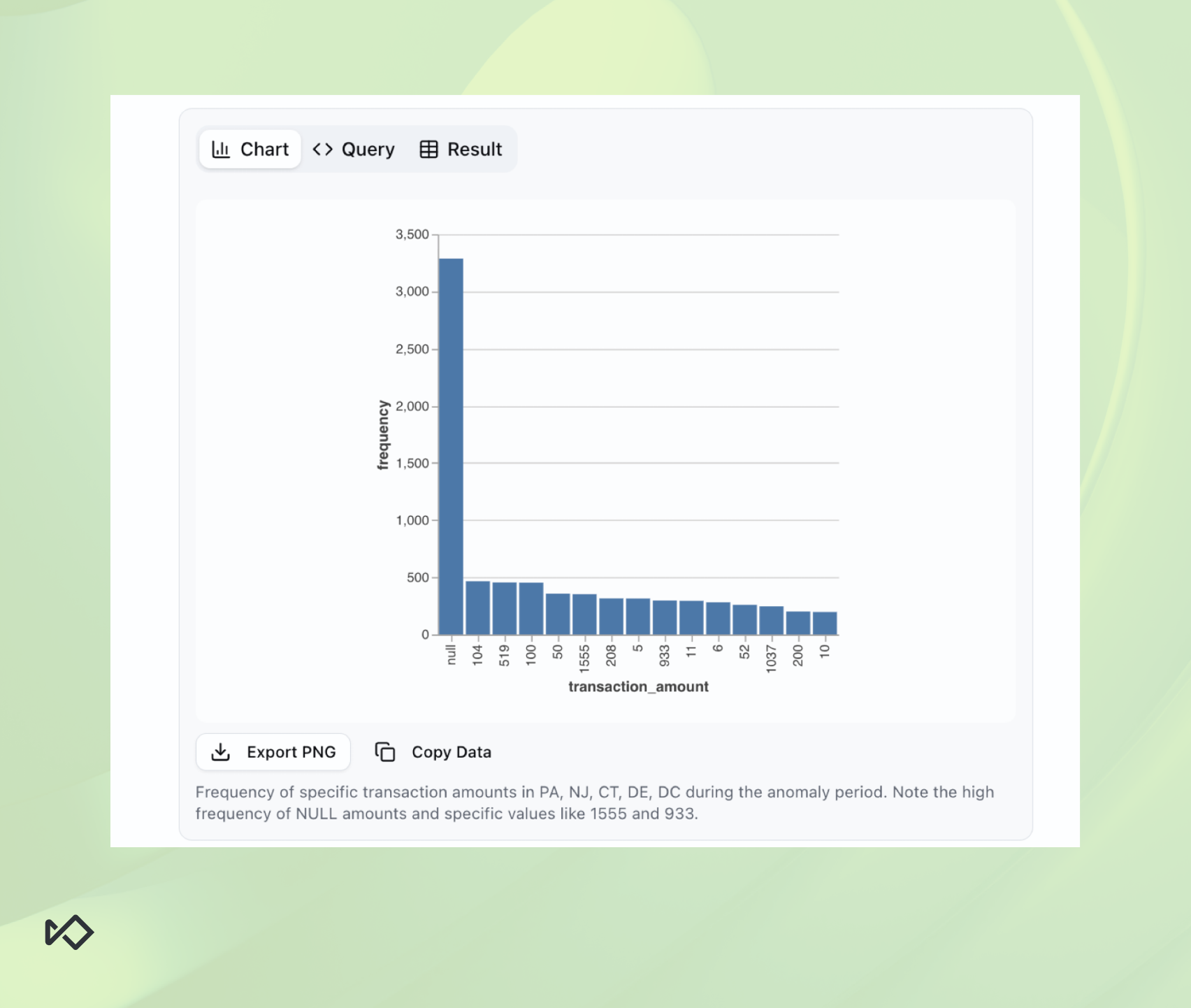
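A segmentation like this can be approximated with a simple count comparison. The sketch below ranks states by how many more sessions they produced in the suspect window than in a baseline window of equal length. The function name and the toy counts are hypothetical, and the agent's actual query may well have looked different.

```python
from collections import Counter


def state_increase(baseline_states, suspect_states):
    """Rank states by the increase in session count, largest first.

    Assumes both windows cover the same number of days, so raw counts
    are directly comparable.
    """
    base = Counter(baseline_states)
    susp = Counter(suspect_states)
    states = set(base) | set(susp)
    # Counter returns 0 for missing keys, so new states are handled too.
    deltas = {state: susp[state] - base[state] for state in states}
    return sorted(deltas.items(), key=lambda kv: (-kv[1], kv[0]))


# Toy data (hypothetical): one entry per session, holding the account state.
baseline = ["PA"] * 3 + ["NJ"] * 2 + ["CA"] * 5
suspect = ["PA"] * 12 + ["NJ"] * 9 + ["CA"] * 5

ranked = state_increase(baseline, suspect)
print(ranked)
```

Ranking by absolute increase rather than percentage keeps tiny states from dominating the list, which is a design choice of this sketch rather than something stated in the investigation.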

Then, it showed the transaction amount distribution, which turned out to be even more interesting:

There were two highly suspicious anomalies here. As the agent notes at the bottom of the chart:

- There’s a massive spike in NULL amounts, possibly indicating some sort of card testing at scale.

- The agent identified an abnormal concentration around very specific amounts such as $1,555 or $933. This isn’t random, but a clear indication of intent exposed by scale.

While the second finding was interesting, the agent appeared to have mislabeled the reason behind the first one. It wasn’t card testing. These were simply signup events that naturally lacked amounts. The analyst later corrected the agent to help it better understand the context of the data it was interpreting.
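To make the amount finding concrete, here is a minimal sketch of how a concentration around exact amounts could be surfaced. It sets aside records with no amount (the signup events mentioned above) and flags any exact amount that makes up an unusually large share of the rest. The thresholds, the function name, and the toy data are assumptions chosen for illustration; only the $1,555 and $933 values come from the investigation.

```python
from collections import Counter


def concentrated_amounts(amounts, min_share=0.1, min_count=3):
    """Return (flagged, null_count).

    flagged maps each exact amount to its count when it appears at least
    min_count times and accounts for at least min_share of all priced events.
    null_count is the number of events with no amount (e.g. signups).
    """
    priced = [a for a in amounts if a is not None]
    null_count = len(amounts) - len(priced)
    if not priced:
        return {}, null_count
    counts = Counter(priced)
    flagged = {
        amount: n
        for amount, n in counts.items()
        if n >= min_count and n / len(priced) >= min_share
    }
    return flagged, null_count


# Toy data (hypothetical): repeated exact amounts, scattered organic
# amounts, and signup events that carry no amount at all.
amounts = (
    [1555] * 6
    + [933] * 4
    + [20, 45, 61, 78, 102, 250, 310, 480, 75, 19]
    + [None] * 5
)

flagged, null_count = concentrated_amounts(amounts)
print(flagged, null_count)
```

Counting the NULL amounts separately, instead of treating them as a zero-dollar bucket, avoids the misreading the agent initially made.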

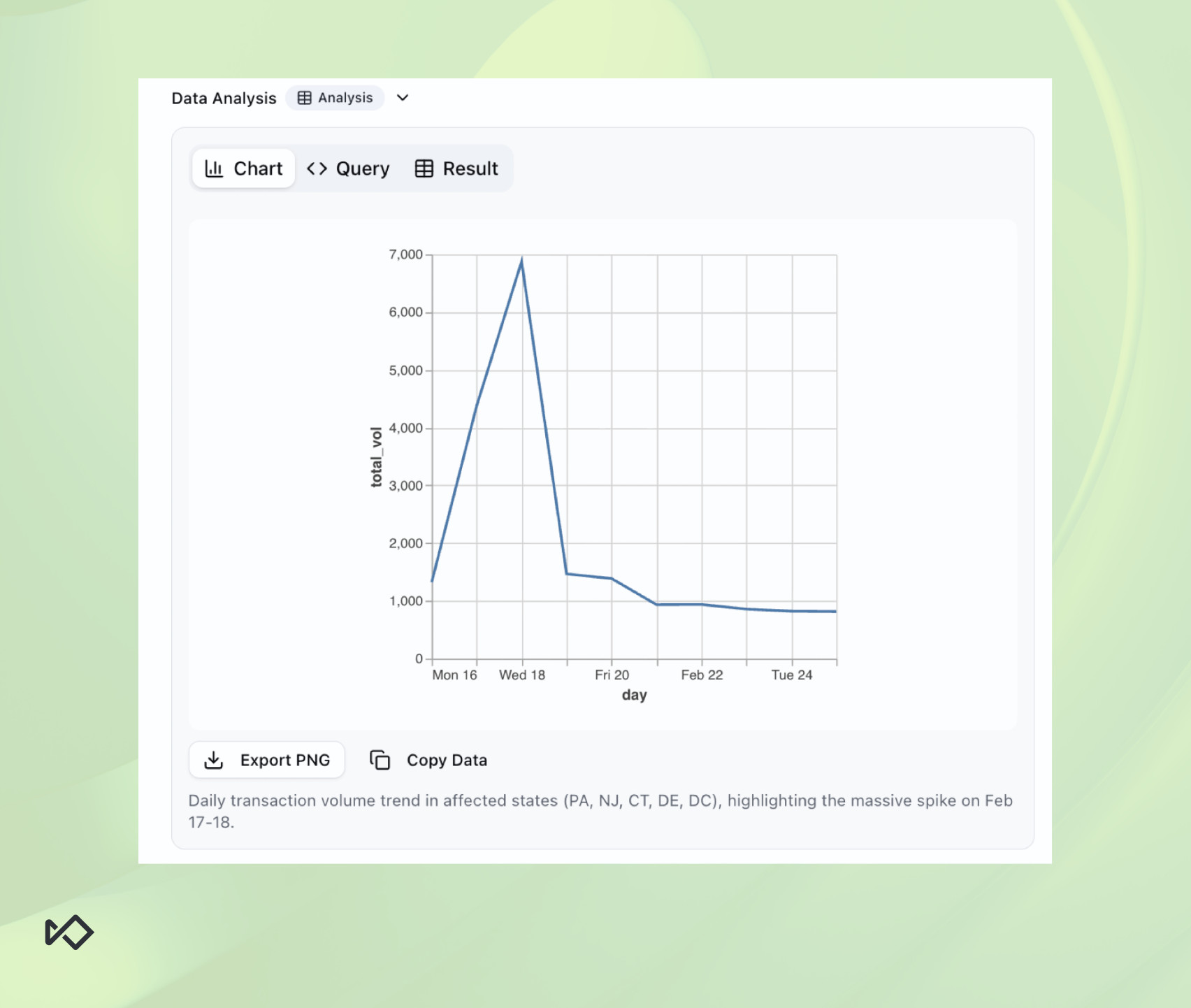

Lastly, the agent also plotted the transactions from the identified affected states on a timeline, making it easier to pinpoint the exact dates of the attack:

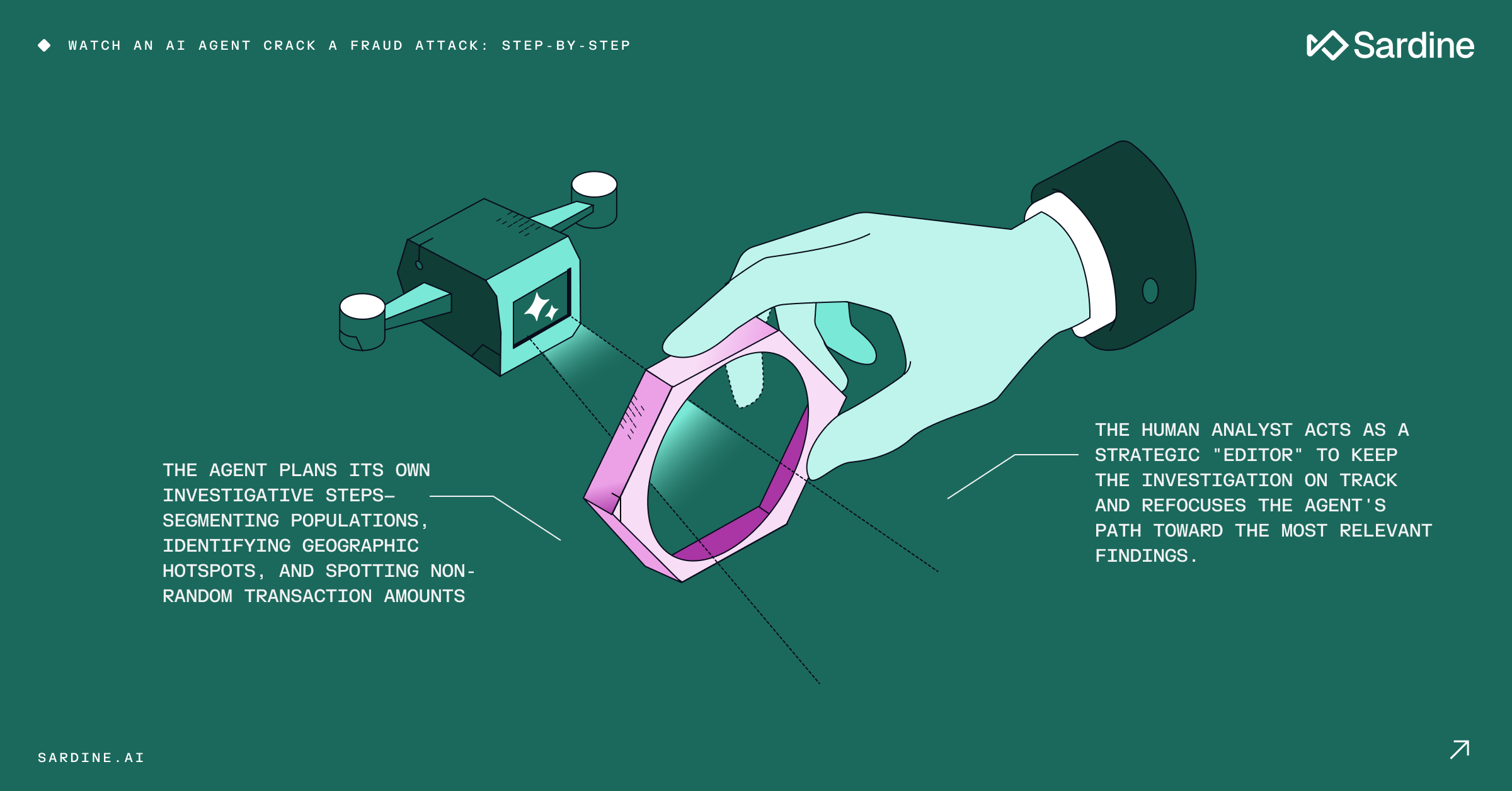

Notice that there weren’t multiple prompts and there was no re-steering of the agent. All of these findings, visuals, and insights were the result of a single prompt. This demonstrates the power of real agentic systems: the ability to reason, plan, and adapt their course of action as they progress through their task.

Unlike a simple chatbot, the Data Analyst Agent functions as a fully autonomous worker, available 24/7. Much like a professional analyst, it produced a clear summary of its findings, ultimately identifying two distinct fraud patterns.

While the summary above is damning on its own, our analyst flagged even deeper concerns. This attack bore striking similarities to the fraud campaign we recently neutralized for another client.

Step three: Pattern analysis on the fraud ring

After confirming an attack was taking place and locating its source, it was time to ask the agent to describe the fraud pattern on a tactical level. We needed to know what kind of behavioral signals we should be flagging and stopping.

Once more, even though we had a good idea of what we would find given that it was the same ring, the analyst chose to give the agent minimal instructions.

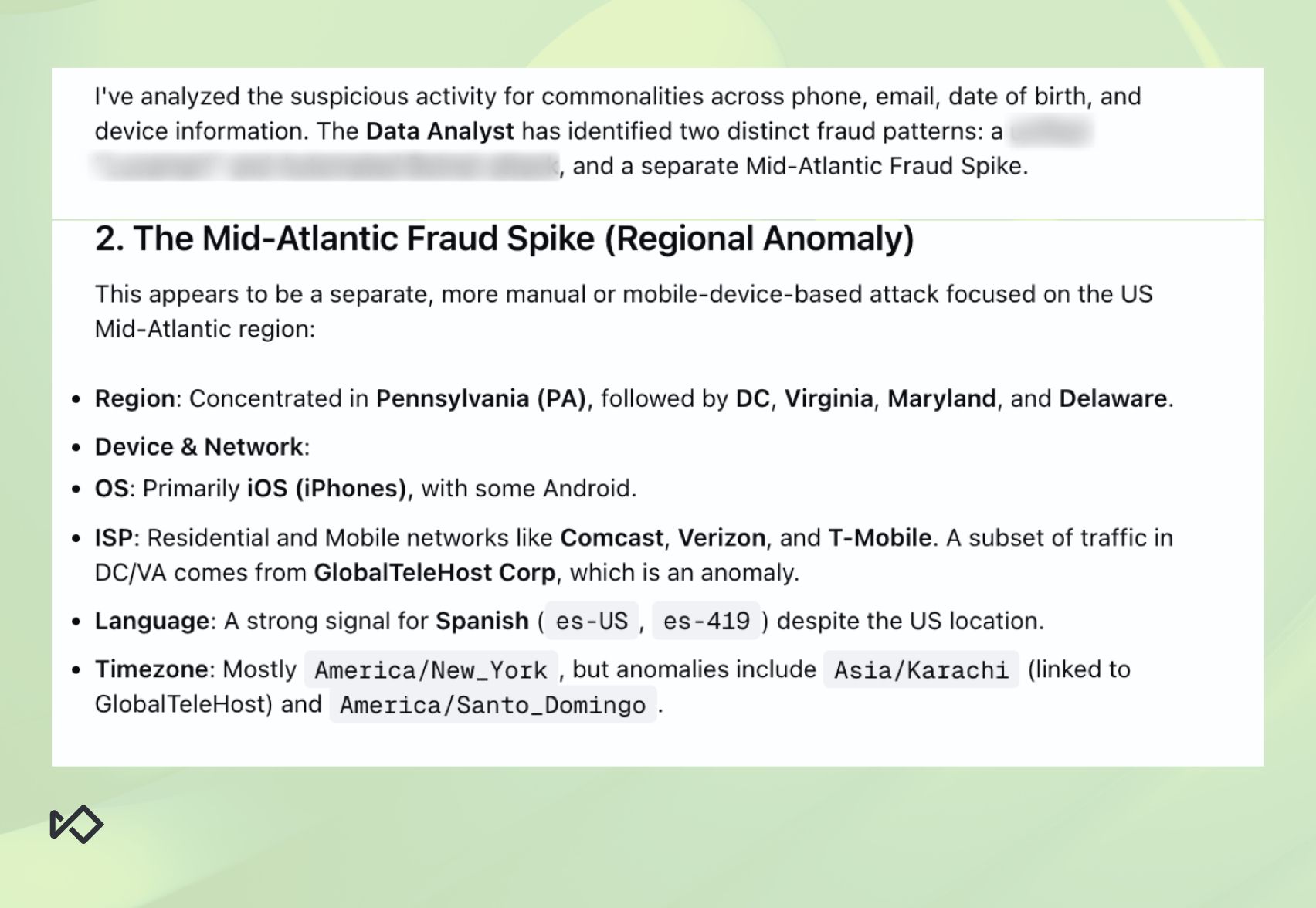

In return, it delivered this summary of its findings:

Note that when the agent looked at the descriptors of the attack, it actually found another suspected fraud pattern within the population.

However, our analyst deemed that other pattern to be small and, to a large extent, already flagged as high risk by our system. For that reason, she chose to ignore that part of the agent’s report.

This nicely showcases why human-in-the-loop is the preferred way of working with AI agents. While the agent is proficient at pulling data and making tactical decisions, a human operator is necessary to provide context and guidance.

This combination keeps operations efficient, but also aware of the broader business context.

Investigation recap: what we learned

Investigating this spike took less than 20 minutes from the moment an anomaly was detected. From that point on, it was conducted mostly by the Data Analyst Agent with just three prompts from our analyst.

Once the findings were checked and validated, we contacted our customer and shared with them the agent's summary as well as some rule suggestions that would help put a stop to the attack.

Another day, another dollar (saved).

But as we wrap up yet another thwarted fraud attack, I want to share some thoughts about our team’s experience in this case:

Agentic AI can run fraud investigations end-to-end

That might sound trivial, even like something we've heard dozens of times over the last 18 months. But when we talk about investigation, we usually refer to single case investigation.

This one is different. Our investigation wasn't triggered by an alert on a specific event. It examined a fraud spike at scale.

Even when Soups shared his own experience with the Data Analyst Agent, it wasn’t a full, end-to-end workflow. In this case, the Data Analyst Agent proved it can work with very little data to start with, and with broad guidance only. The human analyst's role was mostly to steer it away from threads it shouldn't keep pursuing.

That was a first for us.

This will take some getting used to

Using AI agents to do the work we’re used to doing on our own doesn’t come naturally. Now that fraud analysts can delegate some of their tasks to AI, their daily routine is about to change.

Our team is no exception. We only used the agent for a full investigation the second time around. When we detected the first spike, we used the agent only to uncover the extent of the ring after our analyst had already finished the manual investigation.

It was only during the second spike that we used the agent from the start. Evidently, old habits die hard.

But what about teams that don’t have trained fraud analysts? What about teams that aren't equipped to do end-to-end, data-driven investigations? For them, this shift will be even more impactful.

Chained AI fraud agents: This is only the beginning

Not so long ago, Soups wrote about the concept of chaining agents, the idea being that each AI agent should focus on a narrow context and work together with other agents to form impactful workflows.

Which makes one think: if a Data Analyst Agent can investigate known anomalies, what happens if we chain it after an agent that is specialized in anomaly detection?

When you start thinking about agentic fraud prevention systems in this way, the future of fraud teams becomes very blurry. And that’s the exciting part. We’re building that future NOW.