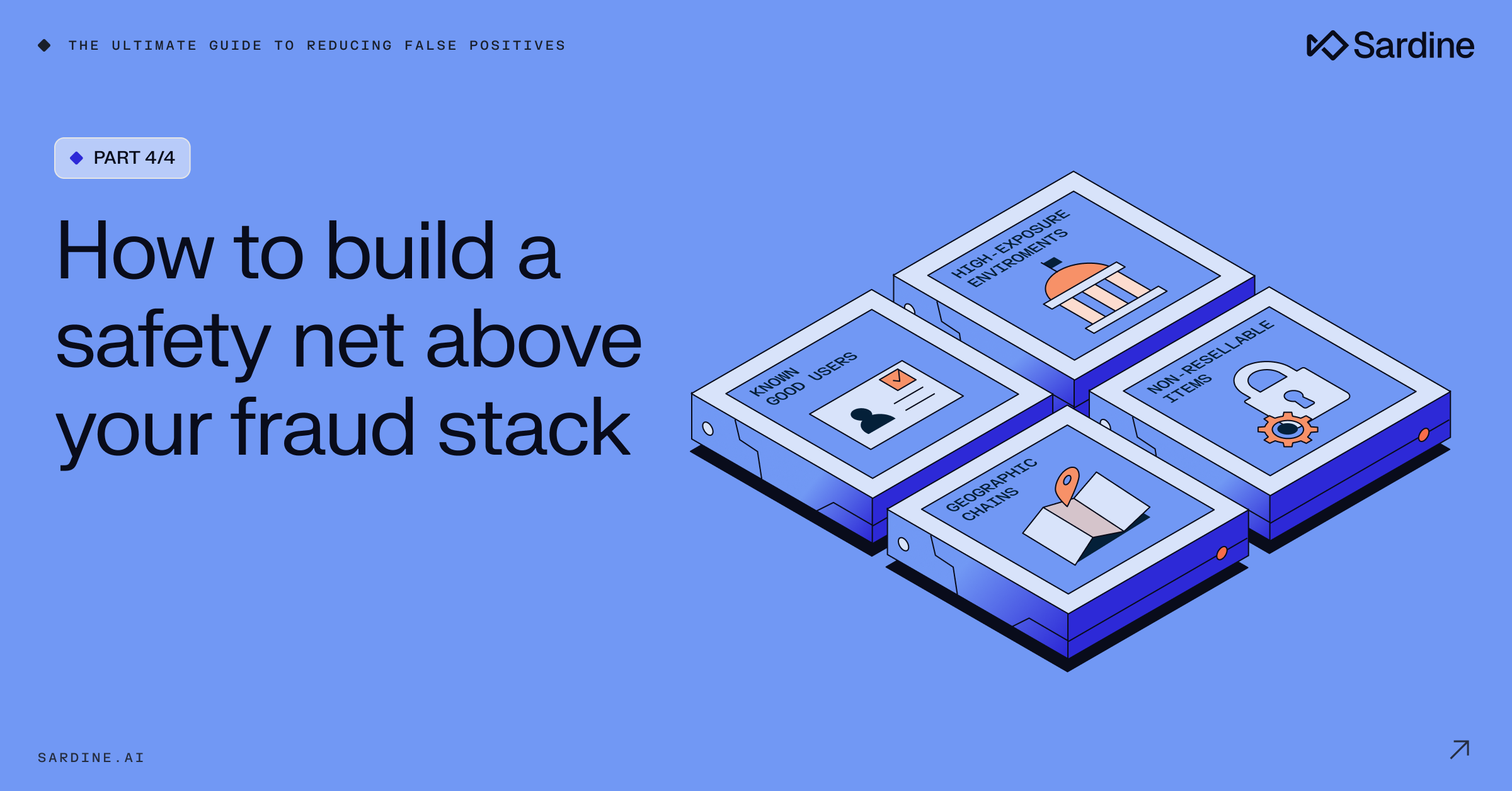

The first three parts of this series dealt with the top-down view of false positives: you measure them, you bucket them, and you fix the parts of your system that misbehave.

But even if you do all of that perfectly, you still face one more problem: Your fraud system is a maze.

You have rules, models, AI agents, manual review queues, partner responses, payment routing decisions, KYC checks, device intelligence, and dozens of other components that are each capable of blocking a user.

Even if each component is “reasonable” on its own, their interactions can still produce false positives in places you never intended. A user might bypass one part of the system only to be caught by another.

This is why mature fraud teams eventually introduce a different mechanism altogether: a high-level override layer that sits on top of the entire stack and prevents the system from harming users you already have reason to trust.

Think of it as the mirror image of a fraud safety net. When any actor in the system, be it a rule, model, agent, or human, tries to block a user, this safety net checks whether there is strong evidence that the user is actually a good one. If so, it reverses the decline.

This idea is extremely powerful when implemented correctly, but it requires thoughtful design.

Let’s walk through how it works.

How to design override systems

There are two questions you must answer before designing any override logic.

The first one touches on the parts of your system that shouldn’t be overridden, and definitely not by default.

Here’s the thing: not all solutions should be treated equally.

If a stolen-card blacklist says a card is compromised, you shouldn’t automatically override that decision just because the IP looks benign. After all, the data intelligence vendor behind that “benign” IP label can be wrong.

You don’t have to make it complex, and there’s no need to pair each piece of fraud detection logic with its own false-positive detection logic. Just mark the solutions in your system that have near-perfect precision and should never be overridden.
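As a rough illustration of that marking step, here is a minimal Python sketch of an override check that respects a protected tier. The source names, the `Decline` type, and the `apply_override` function are all hypothetical; how you decide that trust evidence is "strong" is left out entirely.

```python
from dataclasses import dataclass

# Hypothetical source names: components with near-perfect precision
# that the override layer must never reverse.
PROTECTED_SOURCES = frozenset({"stolen_card_blacklist", "confirmed_fraud_list"})


@dataclass(frozen=True)
class Decline:
    source: str  # which rule, model, agent, or reviewer issued the decline
    reason: str


def apply_override(decline: Decline, has_strong_trust_evidence: bool) -> str:
    """Return the final decision for an event that some component declined."""
    if decline.source in PROTECTED_SOURCES:
        return "decline"  # protected tier: never overridden
    if has_strong_trust_evidence:
        return "approve"  # the safety net reverses the decline
    return "decline"
```

The protected-tier check comes first on purpose: no amount of trust evidence should reach a decline issued by one of those sources.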

The second question, obviously, is which heuristics are strong enough at detecting false positives.

That is already a high bar to meet, but you are not simply detecting false positives. You are identifying them within a declined population that already carries a significantly higher fraud rate. Your heuristics need to be airtight if you’re going to administer them to such a high-risk segment.

So the question remains: where do you start?

I’d like to suggest four key heuristics, or logic families, that usually prove effective at sifting through high-fraud-rate populations and finding the false positives in them.

Which of them you implement, and how exactly, depends on your specific business context.

Family 1: Known good users

This is the simplest and most intuitive category: users you already know and trust.

These signals might include long-standing accounts with clean behavioral history, or users who passed high-friction verification. And I’m not talking about your generic KYC, but something meaningfully identity-affirming.

“Hold up,” you must be thinking, “this is the most trivial piece of information ever! Surely I didn’t waste my time for…this?!”

The catch is that while flagging established accounts is straightforward, the logic changes entirely when you need to assess new accounts or one-time users. In many cases, these “new” users are actually returning users, and if you manage to associate them with their past activity, you might exclude them from high-friction risk mechanisms.

For example, I have three PayPal accounts because I have lived in three different countries, and PayPal requires users to open a new account in each country they move to.

This is not the ideal user journey, but I understand the compliance requirements they need to uphold. At the same time, I also know that whenever I open a new account, they immediately link it to my previous ones.

How? Simply because I’m using the same device to open a new account under the same name as an account I already own. That’s me.

The key insight is that trust can be inferred, not just collected. You don’t have to wait for someone to build a long history on one account. If they are the same human behind multiple accounts, and you’ve verified the legitimacy of one of them, the new one inherits a portion of that trust.

Now, using the same device and name to open a new account is not exactly a ground-breaking heuristic (even though not all teams have even that in place). But you can easily find similar links that don’t rely on the same device and are just as strong.

Here are some examples:

- Card + IP address

- Email + same item bought

- Last name + exact geo location (yes, you can also infer across family members!)

Just remember, it’s not about finding random links. It’s about linking a new event to an airtight, proven, good event.
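To make the linking idea concrete, here is a small Python sketch that checks whether a new event shares a complete composite key with an event you have already proven good. The field names (`device_id`, `card_fingerprint`, `geo_cell`, and so on) are assumptions for illustration, and a real system would look keys up in an index rather than scan a list.

```python
from typing import Iterable

# Composite link keys based on the examples above. Every field in a key
# must match. Field names are hypothetical.
LINK_KEYS = [
    ("device_id", "full_name"),
    ("card_fingerprint", "ip_address"),
    ("email", "item_id"),
    ("last_name", "geo_cell"),
]


def linked_to_proven_good(event: dict, proven_good_events: Iterable[dict]) -> bool:
    """True if the event shares a complete composite key with a proven good event."""
    for good in proven_good_events:
        for key in LINK_KEYS:
            values = [event.get(field) for field in key]
            # A missing field never counts as a match.
            if all(v is not None for v in values) and values == [good.get(f) for f in key]:
                return True
    return False
```

Requiring every field of a key to match is what keeps a single weak signal, such as an email on its own, from creating a link.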

Family 2: High-exposure environments

Fraudsters avoid environments where their real identity or real affiliations could be exposed.

Legitimate users, on the other hand, often operate from those environments naturally.

This leads to a surprisingly powerful heuristic: if a user transacts from a highly controlled or highly traceable network environment, the likelihood they are a fraudster drops dramatically.

Examples include:

- Corporate, government, and military IP networks with strict access control, where the user is likely an employee.

- University IP networks, where the user is either on staff or a student.

- Large non-profit organization (.org) IP networks, where the user is likely an employee.

Committing fraud from such a network is highly unlikely: these networks are monitored for security breaches, and activity on them can be traced back to individual users with relative ease.

Similarly, shipping packages to a highly controlled address (a military base, for example) is another indicator that the user is highly unlikely to be a fraudster.

Keep in mind, this is not only about being identified and prosecuted. Most fraudsters don’t fear that. What deters them from using these assets to commit fraud is the fear of being sanctioned by their own employer.
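A network-based check like this can be sketched with Python’s standard `ipaddress` module. The sketch assumes you maintain your own vetted list of institutional ranges; the CIDR blocks below are reserved documentation ranges used purely as placeholders, and the category names are hypothetical.

```python
import ipaddress
from typing import Optional

# Placeholder ranges (reserved for documentation), NOT real institutions.
# Assumption: you curate and vet the real list yourself.
HIGH_EXPOSURE_NETWORKS = {
    "university": [ipaddress.ip_network("192.0.2.0/24")],
    "corporate": [ipaddress.ip_network("198.51.100.0/24")],
}


def high_exposure_category(ip: str) -> Optional[str]:
    """Return the category of the vetted network containing this IP, if any."""
    addr = ipaddress.ip_address(ip)
    for category, networks in HIGH_EXPOSURE_NETWORKS.items():
        if any(addr in net for net in networks):
            return category
    return None
```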

Family 3: Geographic chains

Geographic mismatches are one of the most common sources of false positives. We talked about this in Part 3 with the U.S.-Canada example. But we can also use geographic data to identify false positives.

Specifically, I’d like to point to two types of geo-chains:

1. Geographic proximity in highly specific regions

If an IP address resolves to a location extremely close to the legitimate billing address, that in itself is not necessarily a redeeming indicator.

For example, if the address is located in NYC, finding an open proxy IP that is less than 10 miles away is probably not so hard.

But what if the address is in a small town or rural area? When you get a strong match to the IP location (<10 miles), this can be a stronger good-user indicator than most people realize. Fraudsters can spoof large cities easily. They cannot spoof obscure villages with the same ease.
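Here is a minimal Python sketch of that proximity check, using the haversine formula for great-circle distance. The 10-mile radius comes from the text; the population cutoff used to decide what counts as a "small town" is an assumption you would tune per market, as are the function names.

```python
import math

EARTH_RADIUS_MILES = 3958.8


def haversine_miles(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def rural_proximity_signal(ip_latlon, billing_latlon, billing_population,
                           max_miles=10.0, max_population=20_000):
    """True only when the billing address is in a small place AND the IP is close to it.

    max_population is an assumed cutoff, not a recommended value.
    """
    if billing_population > max_population:
        return False  # big cities are easy to spoof, so proximity proves little
    return haversine_miles(*ip_latlon, *billing_latlon) < max_miles
```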

2. Nationality-consistent mismatch (traveler)

Again, it’s easy to use examples from my own personal experience: when I use my Spanish card in Israel, I often get blocked. Why? Because of the ES BIN <> IL IP mismatch.

But this shouldn’t be the case. Analyzing my name should mark it as Israeli (the technology exists), and matching it to the Israeli IP should be easy. That’s not to say that a name sharing the IP address’s nationality makes an event good on its own.

However, when we see a very specific pattern where the card mismatches the IP but the name matches the IP, we find a correlation that is too obscure for a fraudster to mimic.

When a fraudster sees a Spanish card? They simply go for a Spanish IP. There’s no reason for them to over-engineer it.

This is subtle and contextual. But when the logic is vetted properly, it can overturn a surprising number of geo-based false positives while introducing minimal additional fraud risk.
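The traveler pattern itself reduces to a two-part condition, sketched below in Python. It assumes you already have a name-origin signal from some classifier (not shown here, and hypothetical) that returns a country code or nothing when it is unsure.

```python
from typing import Optional


def traveler_pattern(card_country: str, ip_country: str,
                     name_country: Optional[str]) -> bool:
    """True when the card mismatches the IP but the name's inferred origin matches it.

    name_country is assumed to come from a separate name-origin classifier
    and is None when that classifier has no confident answer.
    """
    if name_country is None:
        return False
    card, ip, name = card_country.upper(), ip_country.upper(), name_country.upper()
    return card != ip and name == ip
```

For the example above, `traveler_pattern("ES", "IL", "IL")` returns `True`, while a Spanish card on a Spanish IP returns `False` because there is no mismatch to explain.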

Family 4: Non-resellable or low-risk items

This final heuristic applies mainly to transactional businesses.

Fraudsters make money by reselling what they steal. Their economic model depends on transferring the stolen goods, either digital or physical, to someone else. If the item being purchased has no resale market, or only a very weak one, then declining it for “fraud risk” is often counterproductive.

This can look like:

- Educational goods and services

- Niche or hyper-personalized items

- Services that require personal consumption

These transactions should not be treated with the same suspicion as universally fungible goods (electronics, gift cards, digital currency, etc.). In a well-designed override system, “low resale value” can act as a brake that prevents your system from wasting its energy on things fraudsters don’t want in the first place.

| | Family 1: Known good users | Family 2: High-exposure environments | Family 3: Geographic chains | Family 4: Low-risk items |
| --- | --- | --- | --- | --- |
| Core signal | User is linked to a previously verified legitimate identity | User transacts from a traceable, monitored network | Geographic data tells a coherent "traveler" or "local" story | Item has no meaningful resale market |
| Example signals | Same device + name across accounts, card + IP match to prior good event, family member linkage | Corporate, government, or military IPs; university networks; controlled shipping addresses | Rural IP-to-billing proximity (<10 mi); name nationality matches IP but not card (traveler pattern) | Educational services, hyper-personalized items, personal consumption services |
| Why it works | Trust can be inferred, not just collected: the same human has already been validated elsewhere | Fraudsters avoid environments that expose their real identity or lead back to an employer | These patterns are too obscure or contextual for fraudsters to engineer, so they take the simpler path | Fraudsters need resale value; no resale market means no economic incentive to steal |
| Watch out for | Weak linkage signals that fraudsters could mimic (e.g., email alone isn't enough) | VPNs that spoof corporate networks; shared institutional IPs | Logic only holds in specific geo contexts and must be vetted per market | Some "niche" items still have resale value in secondary markets |
| Best for | Businesses with returning users, multi-account flows, or cross-device journeys | Businesses with B2B traffic or users in institutional settings | Businesses with international user bases and geo-mismatch rules | E-commerce and digital goods businesses |

How to deploy override logic safely

Let’s make it simple: you do not turn on override logic for 100% of your traffic on day one. You test it. Robustly.

A typical rollout might look like this:

- Deploy the override rule in shadow mode only.

- Measure the hits and compare them to your predictive analysis. Is it hitting according to your expectations?

- Reduce shadow mode to 80% of the population and introduce a challenger rule that actually overrides declines for the remaining 20% (treat these numbers as examples, and decide how to split the population based on your own risk appetite).

- Watch the results over 30-60 days. This should show you how fast fraud matures in the undeclined population.

- Looks good? Ramp up to 50% and repeat. Unclear? Wait another 30 days. Fraud spikes? Go back to 100% shadow mode (and the drawing board).

- Increase gradually until you reach full deployment.
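One way to implement the population split in this rollout is deterministic hash bucketing, sketched below in Python. This is an assumption about mechanics, not something the rollout requires: the salt value and function names are hypothetical, and any stable assignment scheme would do.

```python
import hashlib


def rollout_bucket(user_id: str, salt: str = "override-rule-v1") -> float:
    """Map a user to a stable value in [0, 1). The salt is a hypothetical rule ID."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    # 48 bits fit exactly in a float, so the result is always strictly below 1.0.
    return int.from_bytes(digest[:6], "big") / 2**48


def override_mode(user_id: str, live_share: float) -> str:
    """'live' reverses the decline; 'shadow' only logs that the rule would have fired."""
    return "live" if rollout_bucket(user_id) < live_share else "shadow"
```

Because each user’s bucket never changes, raising `live_share` from 0.2 to 0.5 keeps every user who was already live in the live group and only adds new ones, which keeps the 30-60 day fraud maturation data for the first cohort clean.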

One thing you might find during this cautious ramp-up is that the fraud slipping through these rules was originally flagged by just a handful of fraud detection solutions.

This is pretty common, and in that case you should move those fraud solutions to the tier that doesn’t get overridden. When you’re developing exclusion logic for your exclusion logic, you know you’re leading the pack.
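Spotting those solutions can be as simple as grouping overridden events by the component that originally declined them. The Python sketch below assumes each overridden event is recorded as a `(decline_source, became_fraud)` pair; the fraud-rate and volume thresholds are illustrative assumptions, not recommendations.

```python
from collections import Counter


def sources_to_protect(overridden_events, max_fraud_rate=0.02, min_volume=50):
    """List decline sources whose overridden events turn into fraud too often.

    overridden_events: iterable of (decline_source, became_fraud) pairs.
    Thresholds are assumed values to be tuned to your own risk appetite.
    """
    totals, frauds = Counter(), Counter()
    for source, became_fraud in overridden_events:
        totals[source] += 1
        frauds[source] += int(became_fraud)
    return sorted(
        source for source, n in totals.items()
        if n >= min_volume and frauds[source] / n > max_fraud_rate
    )
```

The minimum volume guard keeps a source with three overridden events and one chargeback from being promoted to the protected tier on noise alone.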

What you should have by now

You now have a complete framework for reducing false positives:

- In Part 1, you learned how to measure false positives despite your system hiding them.

- In Part 2, we walked through how to bucket them and decide which ones you can actually influence.

- In Part 3, you learned how to fix the misbehaving parts of your fraud stack through rule refinement, threshold adjustments, and data-quality troubleshooting.

- And now, you have a system-level override mechanism that prevents good users from being harmed even when a local piece of logic misfires.

If you put all four pieces together, you go from:

“We think we have some false positives somewhere…”

…to:

“We know exactly where our false positives come from, how to measure them, how to reduce them in the underlying system, and how to protect ourselves from them at the architectural level.”

This is what a high-performing fraud organization looks like. It’s not just about catching fraud. It’s about enabling your business to grow safely.

And that is where the real upside lives.