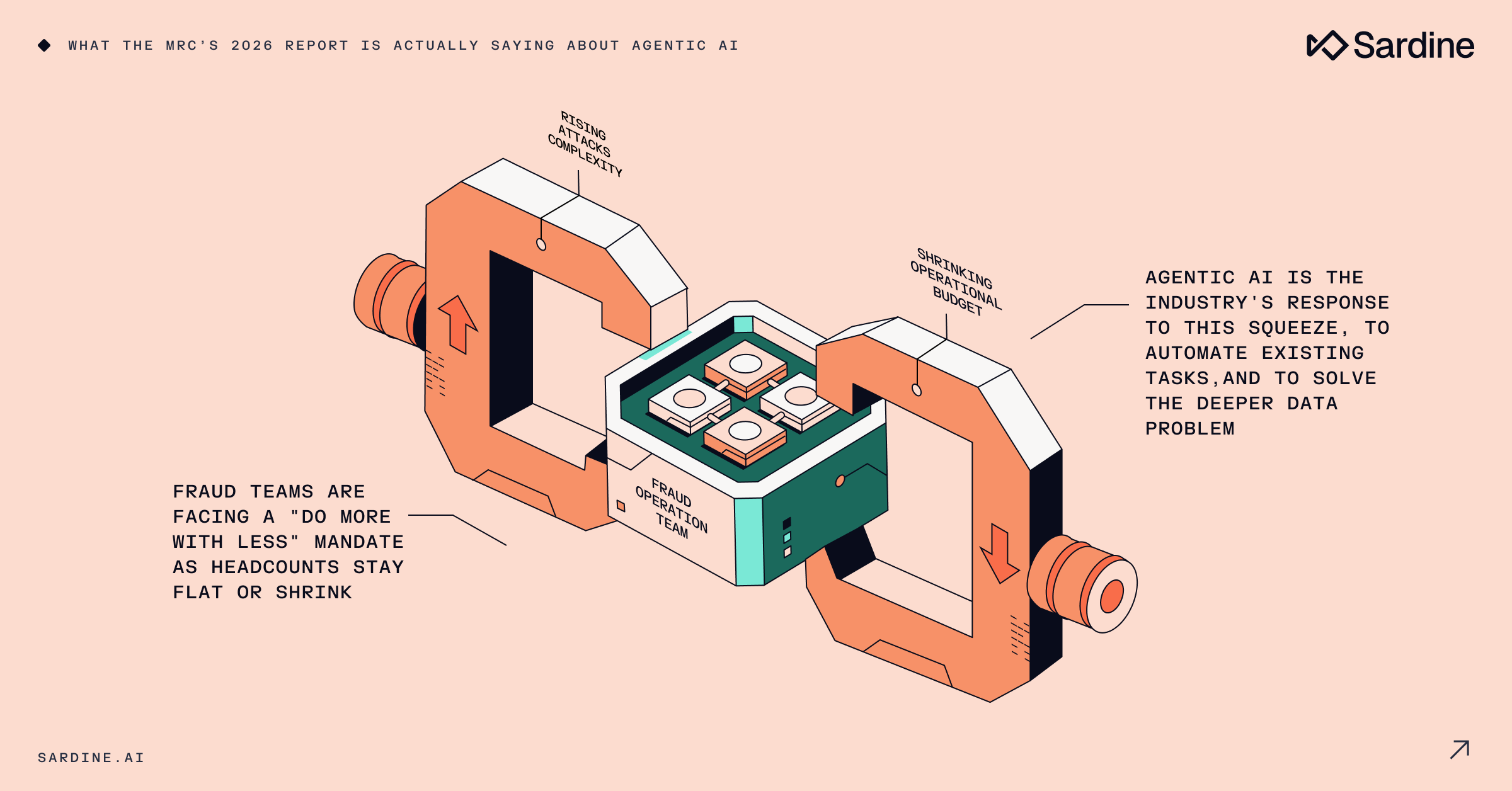

Fraud teams are being squeezed from both sides.

Attack volumes remain stubbornly high while complexity is only ramping up. And yet, despite this relentless pressure, fraud professionals across the globe are bracing for smaller budgets, fewer hires, and mounting demand to do more with less.

This is the picture that emerges from the MRC’s 2026 Global eCommerce Payments & Fraud Report, its 27th edition, co-sponsored by Visa Acceptance Solutions, CyberSource, and Verifi, and conducted by the market research firm B2B International.

Over 1,200 eCommerce merchants across 37 countries were surveyed in late 2025. It’s one of the most comprehensive annual snapshots of the fraud ops landscape, and if you haven’t read it yet, you should.

Naturally, my attention was almost entirely dedicated to a specific section: “Fraud Management Strategies & Challenges.” Its summary has two parts that, when read together, tell a story the report itself never quite finishes.

That story is about agentic AI, though not in the way the industry usually frames it.

The budget has spoken

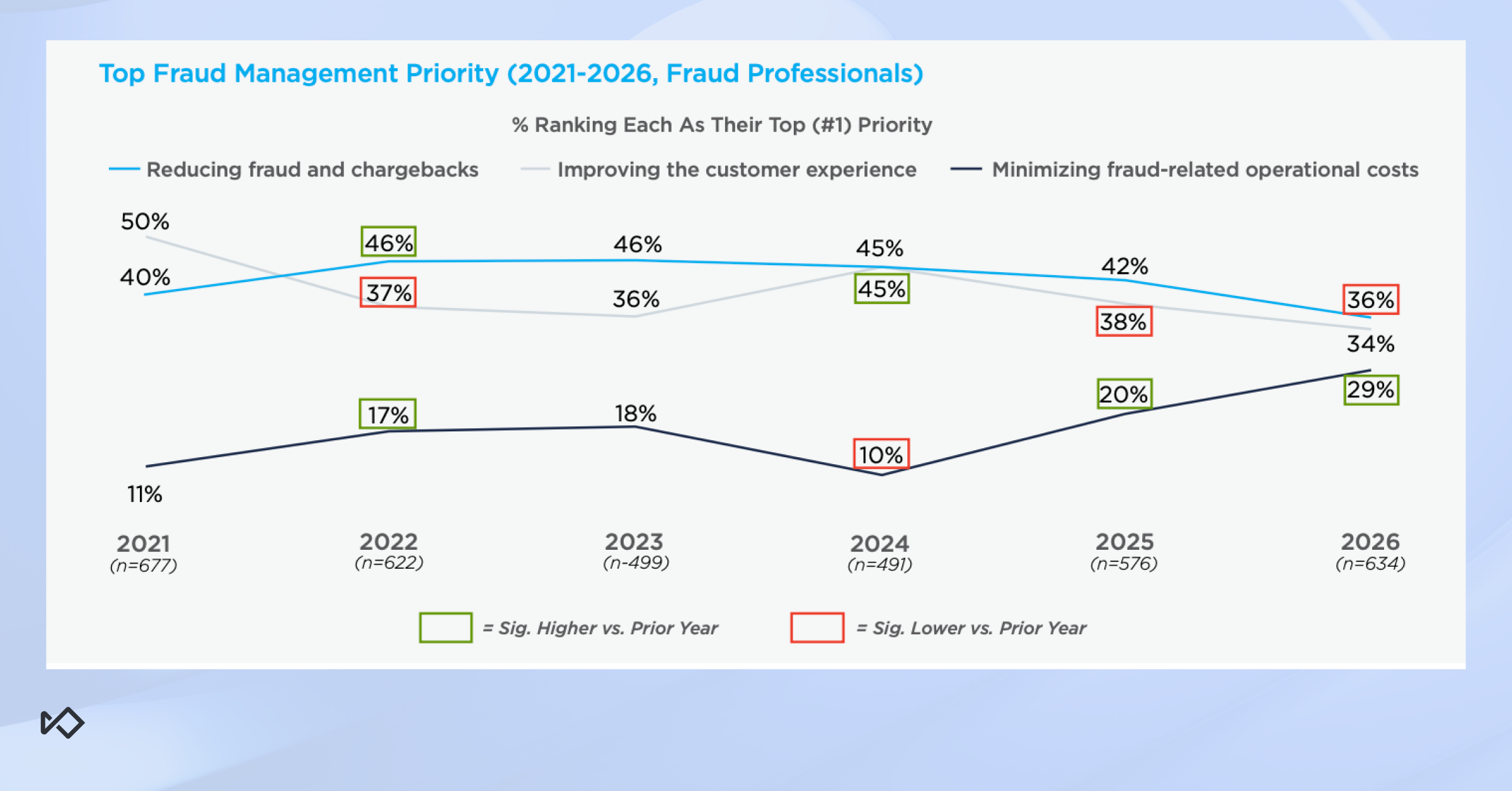

Let’s start with the number that stopped me.

In 2024, 10% of merchants cited minimizing operational costs as their top priority in fraud management. But in 2026, just two years later, that number has nearly tripled to 29%.

For the first time in the MRC’s research history, all three core priorities have converged: reducing fraud and chargebacks, improving customer experience, and minimizing operational costs are now each ranked as the top priority by roughly equal shares of merchants.

This is a massive shift. Cost minimization was a distant third for years.

So what changed?

The easy answer is macroeconomics. Early 2026 hasn’t been a banner year for the economy. But I’d push back on that framing. Nothing about the current environment is dramatically worse than 2023 or 2024.

The more honest reading is that something structural has shifted. Boards and executive teams have watched agentic AI transform customer support over the past two years by significantly reducing headcount while maintaining output. The expectation is now pervasive: if AI can do that for customer support, it can do it for fraud ops too. That expectation is already baked into the headcount decisions being made right now.

The data confirms this: 52% of merchants expect spending on fraud management staff and talent to stay flat or decrease over the next two years. Nearly as many (44%) say the same about their spend on tools and technologies.

Altogether, these data points paint a stark picture of fraud management professionals being forced to manage a growing threat with a shrinking footprint.

And it’s unlikely to reverse in the future.

We’re not going back to the fraud ops headcount levels of 2022 or 2023.

The question isn’t whether fraud teams will stay small. It’s how they are expected to do their jobs.

The obvious answer is adopting agentic AI to fill in those empty seats and allow smaller teams to do more.

Is it the report’s conclusion as well? Not directly, but it also doesn’t leave the reader with other options or takeaways. And after all, in today’s environment agentic AI is the default answer to everything.

The data problem is the real story

Now here’s the part of the report I find more interesting:

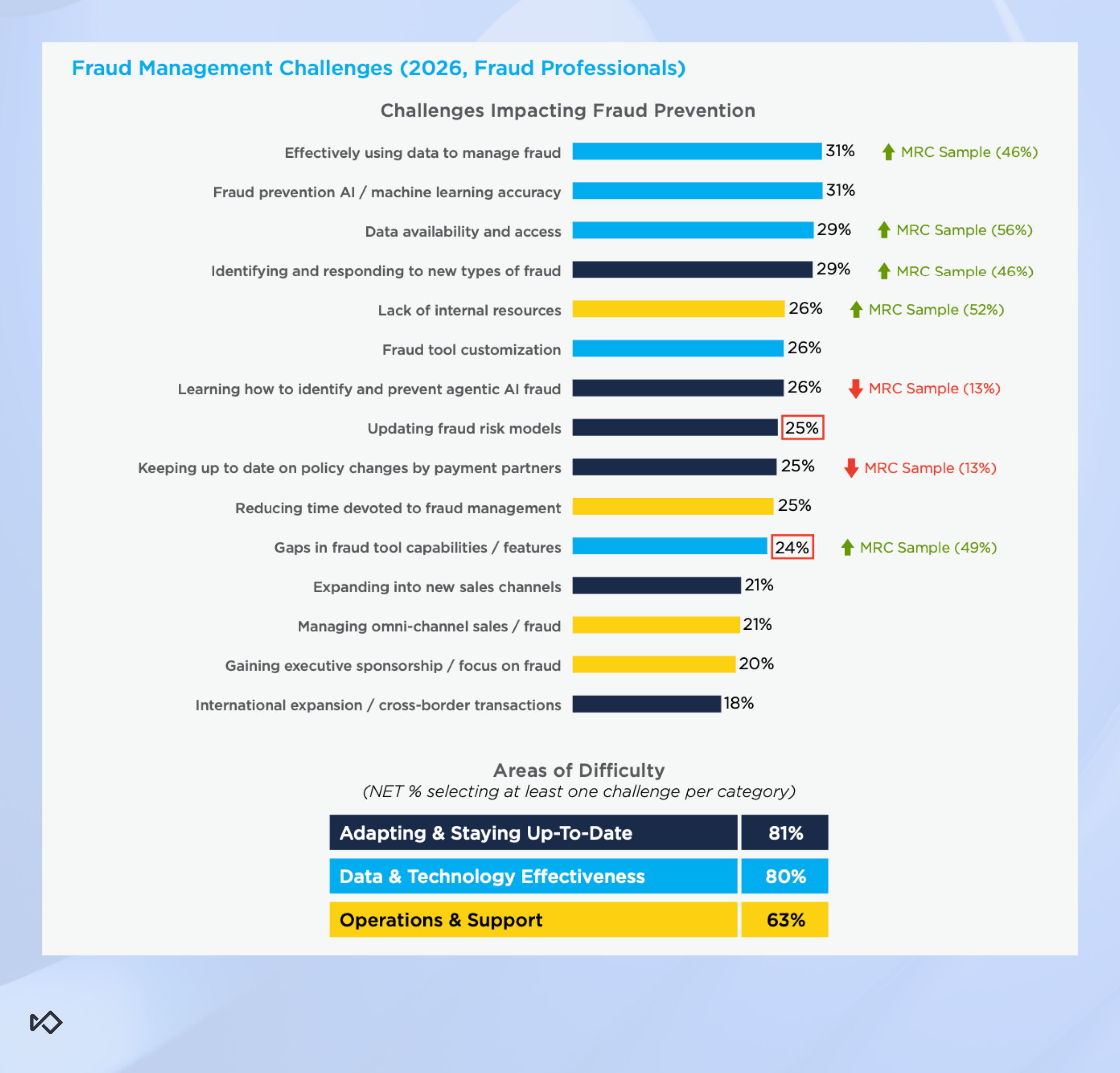

While cost pressure is the immediate hurdle, the biggest frustration for fraud leaders isn’t actually money. And this is where the agentic AI story gets more nuanced and, I’d argue, more compelling.

When presented with 15 different fraud management challenges, merchants in 2025 cited data and technology issues as four of the top six. In total, 80% say they’re struggling with at least one data and tech challenge.

Breaking it down, the top four challenges cited by merchants are:

- Effectively using data to manage fraud - 31%

- Fraud prevention AI / ML accuracy - 31%

- Data availability and access - 29%

- Identifying and responding to new types of fraud - 29%

These look like four distinct problems, but they are actually the same issue viewed from different angles.

Look at the number one challenge: effectively using data. Not accessing it (that’s third on the list), using it. There’s a big difference.

Most fraud teams have tools that collect data. Very few have the analytical infrastructure and talent to turn that data into real-time decisions, investigative leads, or rule updates at any meaningful speed.

The data exists, but the ability to act on it is missing.

The ML accuracy challenge tells a similar story. Fraud prevention ML models haven’t improved substantially over the past decade, and one can argue they’ve even reached a plateau. So when teams wish for better accuracy, I myself remain skeptical that it’ll actually materialize.

But the more interesting question is why teams rank it so high when they likely don’t hold high hopes for it either.

In my mind the explanation is simple: a more accurate model would solve the very same problem we just discussed. If your models automatically solve fraud for you, you don’t need to analyze data to do it yourself.

So what they actually need isn’t a better model. It’s better input signals and smarter R&D processes to identify what the model is missing and generate rules that cover the gaps.

“Identifying and responding to new fraud types” is just a symptom of the first two problems. If your data pipeline is fragmented and your team is already stretched, staying ahead of emerging patterns is close to impossible.

By the time a human analyst identifies a new attack, builds a hypothesis, writes a rule, gets it reviewed, and gets it deployed, the attack has already scaled.

Here’s the connection the report implies but never explicitly makes: agentic AI is a single coherent response to all four of these challenges. But only if used correctly.

The temptation is to use agentic AI to automate what you’re already doing, just like with fraud ops.

That’s a reasonable first step. It saves time and reduces manual burden. But it’s not where the real value lies.

The real value is in “net-new” capabilities: things your team isn’t doing today because they lack the people, the skill set, or the bandwidth to do them at all.

Here’s a concrete example of what “net new” looks like in fraud prevention:

1. An AI agent flags an anomaly in transaction patterns.

2. A second agent reviews that anomaly like a human would, labels each suspected case, and characterizes the shape of the attack.

3. A third agent writes a rule to block it and prepares it for deployment review.

All of this happens without a fraud analyst, without a backlog ticket, and without a sprint cycle.

But that workflow doesn’t exist in many fraud teams today even with humans doing the work.

Running it requires a combination of analytical skill, fraud domain expertise, and operational speed that human teams can’t realistically sustain, especially in an environment that demands lower costs and headcount.

Agentic AI doesn’t just make that workflow faster. It makes it possible.
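To make the three-step workflow above more tangible, here is a minimal Python sketch of the hand-offs between the agents. Everything in it is an illustrative assumption rather than Sardine’s implementation: the names (`Transaction`, `detect_anomalies`, `characterize`, `draft_rule`, `run_pipeline`), the volume-spike heuristic, and the small-amount threshold are all hypothetical, and each "agent" is a plain deterministic function standing in for what would realistically be a model-driven component.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Transaction:
    tx_id: str
    card_bin: str   # first digits of the card number
    amount: float


@dataclass
class Anomaly:
    card_bin: str
    transactions: list[Transaction]


@dataclass
class AttackProfile:
    card_bin: str
    suspected_ids: list[str]   # cases labeled as suspected fraud
    max_amount: float          # largest amount among suspected cases


@dataclass
class ProposedRule:
    description: str
    card_bin: str
    max_amount: float
    status: str = "pending_review"   # never auto-deployed in this sketch


def detect_anomalies(txs: list[Transaction], spike_factor: float = 3.0) -> list[Anomaly]:
    """Agent 1 (stand-in): flag BINs whose volume far exceeds the median BIN volume."""
    by_bin: dict[str, list[Transaction]] = {}
    for tx in txs:
        by_bin.setdefault(tx.card_bin, []).append(tx)
    if not by_bin:
        return []
    typical = median(len(group) for group in by_bin.values())
    return [Anomaly(b, g) for b, g in by_bin.items() if len(g) >= spike_factor * typical]


def characterize(anomaly: Anomaly, small_amount: float = 5.0) -> Optional[AttackProfile]:
    """Agent 2 (stand-in): label suspected cases and describe the shape of the attack.

    Assumption: many small charges on one BIN look like card testing.
    """
    suspected = [tx for tx in anomaly.transactions if tx.amount <= small_amount]
    if not suspected:
        return None
    return AttackProfile(
        card_bin=anomaly.card_bin,
        suspected_ids=[tx.tx_id for tx in suspected],
        max_amount=max(tx.amount for tx in suspected),
    )


def draft_rule(profile: AttackProfile) -> ProposedRule:
    """Agent 3 (stand-in): write a blocking rule and queue it for deployment review."""
    return ProposedRule(
        description=f"Block BIN {profile.card_bin} when amount <= {profile.max_amount:.2f}",
        card_bin=profile.card_bin,
        max_amount=profile.max_amount,
    )


def run_pipeline(txs: list[Transaction]) -> list[ProposedRule]:
    """Chain the three agents: flag, characterize, draft."""
    rules = []
    for anomaly in detect_anomalies(txs):
        profile = characterize(anomaly)
        if profile is not None:
            rules.append(draft_rule(profile))
    return rules
```

Calling `run_pipeline` on a batch of transactions returns draft rules with `status="pending_review"`. In this sketch the final deployment decision is deliberately left to a review step, which mirrors the "prepares it for deployment review" wording in step 3.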

Under the surface, this is exactly what the four MRC challenges are describing.

Teams can’t effectively use their data because doing so requires skills and bandwidth they don’t have. ML accuracy has plateaued, so the next improvement won’t come from the model. It’ll come from better R&D processes.

Identifying new fraud types requires near-real-time analysis at scale, not teams that are stretched so thin they can barely manage basic operations.

The unified answer to all these concerns? Agentic AI deployed on a platform with access to the right data.

What Sardine’s agents are built for

We’ve covered specific use cases in more detail in this blog, so I won’t rehash everything here. But there’s one point worth making directly in the context of what the MRC report surfaces.

The fraud platform challenge isn’t just workflow automation. It’s also data access. An agent is only as useful as the information it can draw on.

An agent that can flag an anomaly but can’t cross-reference it against network-level fraud signals, device behavior, and historical transaction patterns is limited in what it can actually conclude.

What makes Sardine’s agents different is that they operate inside the platform. They have native access to the full data stack: device intelligence, true IP detection, behavioral signals, network graph data, and cross-merchant fraud signals.

An agent running on top of a generic LLM wrapper has to import data, normalize it, and work within whatever context you’ve manually fed it.

An agent running inside Sardine’s platform already knows what happened, why it happened, and what analogous events looked like across the network.

This matters directly for the challenges the report surfaces. “Effectively using data” is not solved by connecting an agent to your data warehouse.

It’s solved by agents that understand fraud context and have access to the enriched fraud data itself, be it device fingerprint, IP masking behavior, or velocity patterns.

But I also want to be direct about the limits here.

Agentic AI won’t fix a broken fraud stack. If your data is fragmented, your tooling is disconnected across multiple vendors, and your team hasn’t established clear investigative SOPs, agents will just inherit those problems. Garbage in, garbage out applies here as much as anywhere.

The MRC data shows 43% of merchants are prioritizing fraud orchestration over the next year. That’s not coincidental.

Orchestration is the prerequisite. Automation without orchestration just speeds up the chaos.

There are also real failure modes to name. We’ve written about the three most common in the blog article mentioned above: hallucinated conclusions, over-suspicious escalation, and outputs that can’t be defended to compliance or operations teams.

These are real risks. The way to mitigate them is using dedicated agents for narrow, specific tasks rather than general-purpose agents trying to run end-to-end investigations.

Agentic AI rewards teams that have done the foundational work of setting up clean data, coherent systems, and clear processes. For teams that haven’t, it’s likely to surface those problems faster than it solves them.

The bottom line

The MRC’s 2026 report is a snapshot of an industry at an inflection point.

Budgets are tighter, teams are smaller, and fraud is more complex than ever before.

And while agentic AI is the source of much of the downward pressure on teams to cut costs, it is also likely the only viable solution.

But here’s the thing: the teams that use it to automate their existing workflows will only see incremental gains. That’s worth doing, don’t get me wrong, but it’s not the ceiling.

The teams that use AI to build capabilities they’ve never had before, such as automated fraud R&D and real-time pattern identification, are the ones who will actually change the equation.

And so the real question isn’t whether your team should adopt agentic AI. It’s what you’re going to build with it that you couldn’t build before.