If you're a fraud or compliance leader, the regulatory landscape right now feels like it's moving in two directions at once.

The U.S. is signaling a shift toward outcomes-based expectations rather than rigid, prescriptive rules. At the same time, the EU is doubling down on detailed, structured requirements under the AI Act. And despite their differences, both are converging on the same underlying demand: programs need to be more effective, more dynamic, and more demonstrably capable of managing the risks they face.

Here's what that actually means for how you build and fund your programs in 2026.

The U.S.: Less prescription, higher expectations

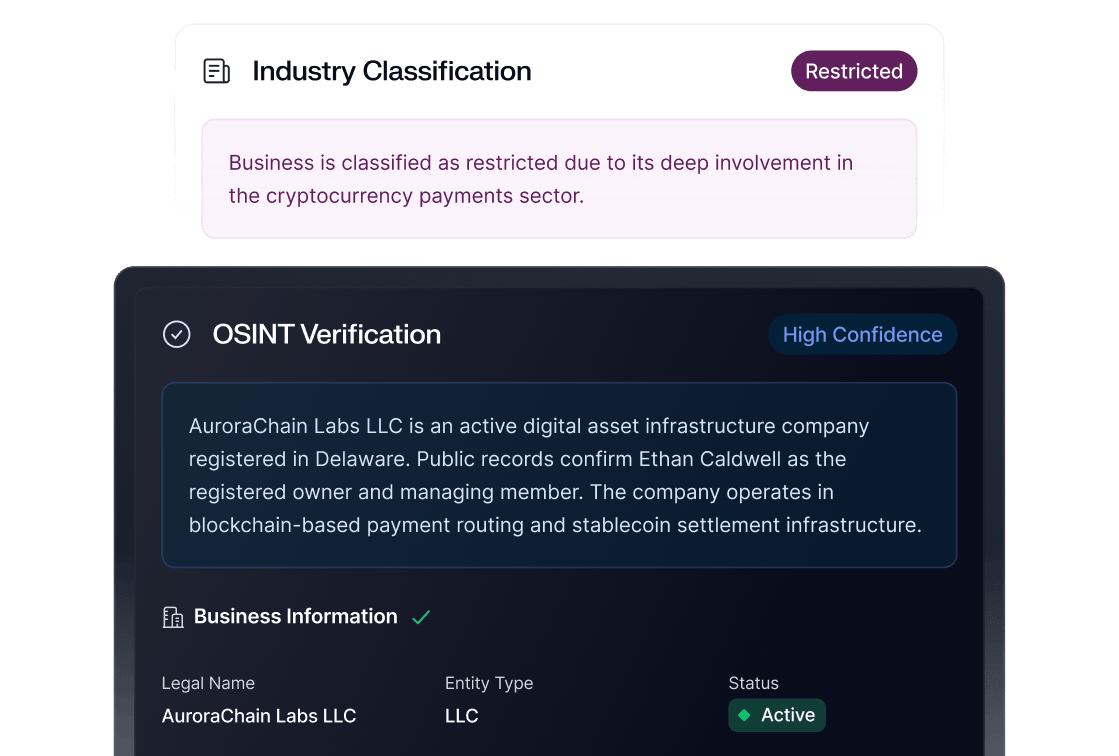

FinCEN's recent notice of proposed rulemaking signals some notable shifts in how the U.S. regulatory apparatus is thinking about AML and fraud prevention.

One significant change involves the potential requirement for risk assessments across all reporting entities, not just certain categories. The expectation is also moving away from annual refreshes to updates made promptly when the risk landscape changes. As I shared during our recent Fraud and AML Trends webinar, this fundamentally changes the tempo of compliance program management. Static, annual-cycle approaches won't meet the bar.

The proposed rules also explicitly call out non-traditional risk signals, such as device data, phone and email intelligence, and IP-related signals, as legitimate inputs to customer risk assessment. This is a meaningful nod toward the fraud-adjacent signals that compliance teams have historically treated as outside their domain.

Perhaps most interesting: regulators are showing strong enthusiasm for consortium-level data sharing. This could eventually involve merchants and financial services firms cooperating on risk rather than each evaluating it in isolation. Significant movement on this front is expected over the next 12 months.

There's also an intriguing signal on model risk management. The OCC appears to be leaning toward viewing traditional model risk management frameworks as potentially overburdensome for AML and fraud applications. This suggests that the current approach may be creating barriers to AI adoption rather than ensuring safety. While this isn't fully fleshed out yet, it’s worth watching closely.

The EU: Structure and specificity under the AI Act

The EU is taking a different route to a similar goal. Under the AI Act, AML and compliance solutions that leverage AI are broadly classified as high-risk. This classification triggers a more demanding set of requirements around documentation, testing, human oversight, and transparency.

For institutions operating in or serving EU markets, this means a higher bar for deploying AI in compliance workflows. The requirements are strict, but the underlying question is key: does this structure actually help build solutions that are more effective and scalable in the long run, or does it create friction that slows adoption?

While both the U.S. and EU are pursuing clarity and efficiency, they're arriving there from different starting points. U.S. regulators are removing prescriptive rules and trusting institutions to innovate. EU regulators are providing detailed guardrails and expecting institutions to operate within them.

Both expect you to be able to explain and defend your decisions.

The explainability imperative

Regardless of jurisdiction, one theme is non-negotiable: explainability.

If you can't explain a decision to an examiner, such as why a transaction was approved, or why a particular risk score was assigned, the quality of your model is irrelevant. This applies to machine learning models, rules-based systems, and, increasingly, any AI-driven automation in your fraud or compliance stack.

During our webinar, Matt Vega, Sardine's Chief Fraud Strategist, raised an important nuance regarding governance: much of the current regulatory discussion focuses on traditional machine learning, where explainability and transparency are well-understood challenges. However, the emerging frontiers of generative and agentic AI introduce the distinct problem of hallucination. And the regulatory frameworks haven't caught up to that distinction yet.

For fraud fighters, this creates an asymmetry.

On the attacker side, hallucination in AI models is almost a feature, creating unpredictable variations that serve as curveballs against detection systems.

On the defense side, hallucination is never acceptable. This means the standard for deploying AI defensively is inherently higher than the standard attackers face, and your governance frameworks need to account for that.

What to build for

The regulatory direction, taken as a whole, points toward several practical priorities in 2026.

To stay ahead of these shifts, prioritize making your risk assessments dynamic. This requires data pipelines and model monitoring that can be updated “promptly,” as U.S. regulators suggest. By building for high-frequency updates rather than annual cycles, you put your infrastructure in a position to respond quickly to shifts in the risk landscape.
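One way to picture the move from annual cycles to prompt updates is a refresh check that fires on material events as well as on elapsed time. The sketch below is illustrative only: the event names, the `RiskEvent` type, and the `refresh_reasons` helper are hypothetical, and the 365-day fallback is an assumption rather than anything drawn from the proposed rules.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical trigger taxonomy; a real program would define its own.
MATERIAL_EVENTS = {
    "new_product_launch",
    "new_geography",
    "fraud_typology_alert",
    "regulatory_change",
}


@dataclass(frozen=True)
class RiskEvent:
    kind: str
    occurred_at: datetime


def refresh_reasons(last_assessed, now, events, max_age=timedelta(days=365)):
    """Return why a risk assessment needs a refresh (empty list means still current)."""
    reasons = []
    for event in events:
        # Only material events that happened after the last assessment count.
        if event.kind in MATERIAL_EVENTS and event.occurred_at > last_assessed:
            reasons.append(f"event:{event.kind}")
    # Time-based fallback so an assessment never goes stale silently.
    if now - last_assessed > max_age:
        reasons.append("max_age_exceeded")
    return reasons


last_assessed = datetime(2026, 1, 15)
events = [
    RiskEvent("new_geography", datetime(2026, 2, 20)),
    RiskEvent("marketing_campaign", datetime(2026, 2, 25)),  # not material
]
print(refresh_reasons(last_assessed, datetime(2026, 3, 1), events))
```

Returning the reasons, rather than a bare yes or no, means the record of why an assessment was refreshed can be stored alongside the assessment itself.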

This isn’t a revelation, but success in this landscape requires you to break down the silos between fraud and AML prevention. Regulators increasingly view these as a single risk surface and expect your institution to provide:

- Shared visibility across departments.
- Unified data sets for risk scoring.
- Coordinated decision-making processes.

Investing in explainability infrastructure now will prevent friction during future exams. Every automated decision in your fraud and compliance stack should follow documented workflows with clear thresholds and escalation paths. This type of documentation remains critical and provides the audit trail examiners now expect.
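As a rough illustration of what "documented workflows with clear thresholds and escalation paths" can look like in practice, here is a minimal sketch of a decision function that writes its own audit record. Everything in it is an assumption made for illustration: the `Policy` and `DecisionRecord` types, the threshold values, the escalation path names, and the reason codes are hypothetical and do not come from any regulator or vendor API.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class Policy:
    version: str
    review_threshold: float  # scores at or above this go to an analyst
    block_threshold: float   # scores at or above this are blocked and escalated


@dataclass(frozen=True)
class DecisionRecord:
    transaction_id: str
    score: float
    outcome: str
    escalation_path: str
    reason_codes: tuple
    policy_version: str
    decided_at: str


def decide(transaction_id, score, reason_codes, policy, now=None):
    """Apply documented thresholds and return a record an examiner could read."""
    now = now or datetime.now(timezone.utc)
    if score >= policy.block_threshold:
        outcome, path = "block", "fraud_ops_lead"
    elif score >= policy.review_threshold:
        outcome, path = "manual_review", "analyst_queue"
    else:
        outcome, path = "approve", "none"
    return DecisionRecord(
        transaction_id, score, outcome, path,
        tuple(reason_codes), policy.version, now.isoformat(),
    )


def to_audit_line(record):
    """Serialize one decision as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


policy = Policy(version="2026-01", review_threshold=0.6, block_threshold=0.9)
record = decide("tx-1", 0.72, ["new_device", "ip_country_mismatch"], policy)
print(to_audit_line(record))
```

Storing the policy version and reason codes with every decision is the design choice that matters here: it lets you answer "why was this approved?" using the thresholds that were in force at the time, even after they change.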

Finally, focus on building flexible systems. Regulatory guidance is still evolving, and platforms that can adapt to new rules and reporting requirements will be significantly more cost-effective than bespoke point solutions.

The regulatory signal from both sides of the Atlantic is that AI is not just permitted but expected for fraud and AML teams. Now is the time to lean into AI adoption. FinCEN is openly asking what a world looks like where 100% of activity is reviewed, not just anomalies. Teams that figure out responsible, auditable AI deployment first will have a significant advantage in both risk management and regulatory standing in the coming years.