Why Leaders Choose Worse Fraud Tools

In this episode, I start with a slightly strange moment at the Mastercard offices. I was catching up with someone I know and he told me I had started pushing a new narrative.

Okay. Apparently, the narrative was that rules are better than AI.

Honestly, I get why it looked that way. I talk about rules vs AI in fraud prevention quite a bit. But that is not really the point.

The point is control.

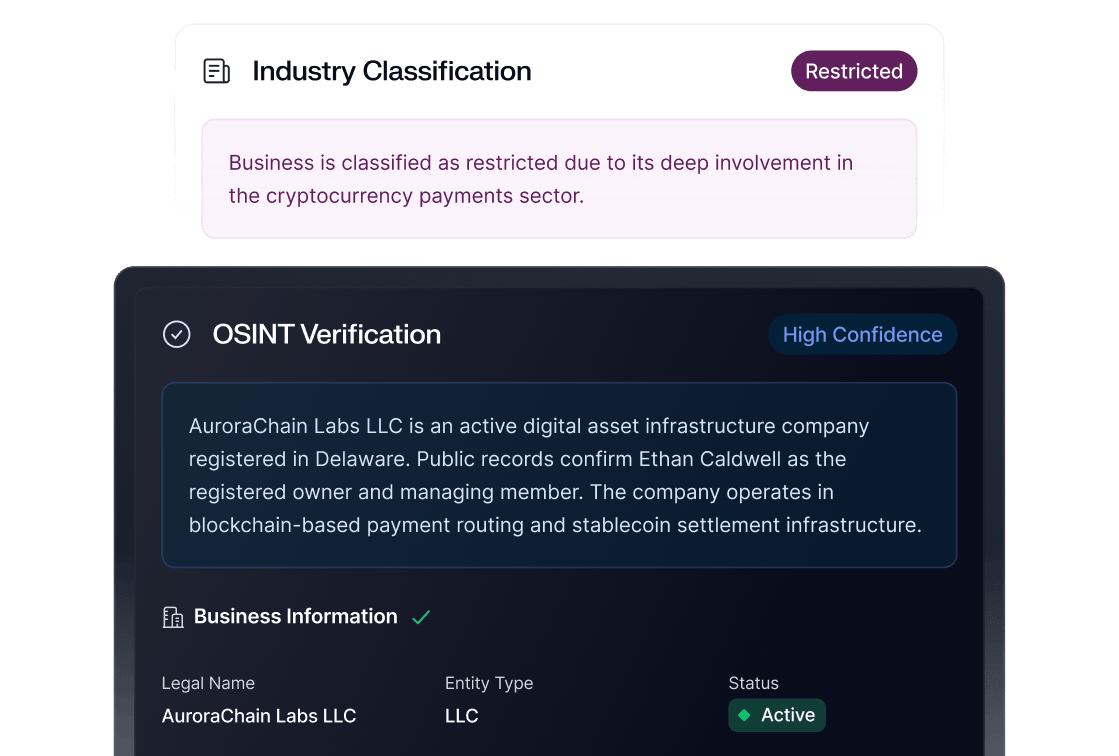

AI fraud prevention, machine learning fraud detection, whatever label you prefer: all of it sounds great until the person responsible for money movement and customer acquisition has to approve the change. At that point, accuracy is not the only thing that matters. Trust matters. Explainability matters. Strategy visibility matters. And if leaders do not feel in control, they will choose worse fraud tools.

Not because they are irrational.

Because breaking the business is, technically speaking, not a good look.

What you’ll hear in this episode:

- A breakdown of why the “rules vs AI in fraud prevention” debate misses the bigger issue

- Why leaders often choose fraud detection rules over stronger AI fraud tools

- How fraud risk management changes when the process touches money movement and customer acquisition

- Why fraud decisioning depends on trust, not just model accuracy

- What fraud AI tools often get wrong about explainability

- How chargeback rate optimization can become more useful when users can compare low-, medium-, and high-risk strategies

- Why AI trust in fraud prevention depends on clear KPIs, plain answers, and visible tradeoffs

Listeners can expect a conversation that moves from “which tool performs better?” to the more uncomfortable question: who actually feels safe enough to make the decision?

Who should listen:

- Fraud leaders and fraud operators

- Risk and compliance teams

- Product teams building fraud AI tools

- Financial institution leaders evaluating AI fraud prevention

- Fraud technology vendors and solution architects

- Anyone involved in fraud decisioning, chargeback rate optimization, or machine learning fraud prevention

Basically, if you have ever looked at a model and thought, “The performance is better, so why won’t they use it?” this one is for you.

Episode notes:

This episode is for anyone who has ever watched a “better” fraud tool lose to a simpler one and thought, wait, how did that happen?

I’m unpacking why AI fraud prevention does not win on accuracy alone. In fraud decisioning, control is part of the product. If leaders cannot see the strategy, understand the KPI tradeoffs, or explain what happens when something goes wrong, they are going to choose the tool that feels safer.

Even if it is worse.

We’ll get into why fraud detection rules still have so much staying power, why machine learning fraud prevention often feels harder to trust than it should, and what fraud AI tools need to show users before anyone is comfortable handing over decisions tied to money movement, customer acquisition, and chargeback rate optimization.

No dramatic anti-AI rant.

Just a practical look at why AI trust in fraud prevention is harder than vendors want it to be, and why the path forward probably starts with simpler strategy visibility, clearer KPIs, and fewer dashboards that look like they were designed to impress a conference audience.

Plain answers people can actually use.
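For anyone who wants to picture what "visible tradeoffs" could look like, here is a rough sketch, not how any particular fraud tool works. Everything in it is made up for illustration: the transactions, the risk scores, the three threshold values, and the strategy names are all hypothetical. It also uses the fraud rate among approved transactions as a stand-in for chargeback rate, which is a simplifying assumption. The idea is just to put three strategies side by side with the same plain KPIs.

```python
from dataclasses import dataclass


@dataclass
class Txn:
    score: float    # hypothetical model risk score in [0, 1]
    is_fraud: bool  # hypothetical ground-truth label


# Made-up sample data, for illustration only.
TXNS = [
    Txn(0.05, False), Txn(0.10, False), Txn(0.20, False), Txn(0.30, False),
    Txn(0.35, True), Txn(0.45, False), Txn(0.55, False), Txn(0.65, True),
    Txn(0.80, True), Txn(0.95, True),
]

# Hypothetical strategies: decline anything scoring at or above the threshold.
STRATEGIES = {"low_risk": 0.30, "medium_risk": 0.50, "high_risk": 0.70}


def kpis(txns, threshold):
    """Return three plain KPIs for one decline threshold."""
    approved = [t for t in txns if t.score < threshold]
    declined = [t for t in txns if t.score >= threshold]
    good_total = sum(not t.is_fraud for t in txns)

    approval_rate = len(approved) / len(txns)
    # Proxy for chargeback rate (assumption): share of approved txns that are fraud.
    fraud_in_approved = (
        sum(t.is_fraud for t in approved) / len(approved) if approved else 0.0
    )
    # Share of good customers who were turned away.
    false_decline_rate = (
        sum(not t.is_fraud for t in declined) / good_total if good_total else 0.0
    )
    return {
        "approval_rate": approval_rate,
        "fraud_in_approved": fraud_in_approved,
        "false_decline_rate": false_decline_rate,
    }


# Print one row per strategy so the tradeoff is visible at a glance.
print(f"{'strategy':<12} {'approved':>9} {'fraud in approved':>18} {'good declined':>14}")
for name, threshold in STRATEGIES.items():
    k = kpis(TXNS, threshold)
    print(
        f"{name:<12} {k['approval_rate']:>9.0%} "
        f"{k['fraud_in_approved']:>18.0%} {k['false_decline_rate']:>14.0%}"
    )
```

On this toy data, the stricter strategy approves fewer transactions and lets less fraud through, while the looser one approves more and turns away fewer good customers. That is the kind of tradeoff a decision-maker can read without needing to trust a black box.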

Key takeaways:

The AI model may be stronger. The machine learning fraud prevention strategy may perform better. The optimization may be real.

But if the person making the decision does not feel in control, none of that matters very much.

So maybe the better question is not, “How do we prove AI is more accurate than rules?”

Maybe the better question is, “How do we make AI fraud prevention feel safe enough to use?”

Less exciting. Probably more useful.